Development of an Integrated Branding Platform Based on Generative AI and a Comparative Usability Study Between Designers and Non-Designers

Abstract

Background Generative artificial intelligence (GenAI) tools have expanded access to brand design, yet existing platforms remain fragmented, requiring users to manually transfer outputs across multiple independent tools for naming, color, logo, and visual identity generation. This fragmentation causes workflow discontinuity and visual inconsistency, undermining the strategic coherence essential to brand identity systems. While multimodal AIGC integration has been explored at the model level, practical platform-level solutions for design workflows remain limited. This study addresses this gap by proposing Branderia, an integrated branding platform built on a data-continuity architecture that automatically propagates outputs across modules to maintain semantic and visual coherence throughout the brand design process.

Methods The research followed a three-phase design process. First, a tool evaluation framework was established using seven metrics (accuracy, creativity, brand alignment, visual quality, consistency, efficiency, integrability) that integrate criteria from AIGC evaluation literature and brand design research. Second, an AI expert study group (n=8) evaluated candidate tools across four categories (naming, color, logo, image) through standardized protocols, selecting Gemini, Huemint, Smashinglogo, and Midjourney for integration into Branderia via a Figma-based prototype. Third, 50 participants (20 professional designers and 30 non-designers) completed branding tasks using the platform. Usability was measured across five ISO 9241-11 dimensions (efficiency, effectiveness, ease of use, learnability, satisfaction) using 7-point Likert scales. Task completion times were recorded, and independent-samples t-tests examined between-group differences.

Results The platform achieved strong usability (M=5.61/7.0, Cronbach’s α=0.93) with an average task completion time of 23.86 minutes, substantially reducing time when compared to conventional multi-tool workflows. Both groups produced equivalent output quality (M=5.68) and brand consistency (M=5.50), validating the platform’s democratization goal. However, designers completed tasks 33.2% faster (19.9 vs. 26.5 min) and reported significantly higher ease of use (5.90 vs. 4.63, p<.001) and satisfaction (6.32 vs. 5.39, p<.001), reflecting efficiency gains through mental model alignment with the platform’s sequential workflow. Conversely, non-designers rated practicality higher (5.83 vs. 5.50, p=.032), viewing outputs as final deliverables rather than refinement starting points. These differences reveal expertise-dependent value mechanisms: the platform amplifies efficiency for experts while providing guided scaffolding for non-experts.

Conclusions This study demonstrates that data-continuity-based integration of AIGC tools resolves workflow fragmentation and maintains brand coherence while delivering differentiated value across user groups. The findings establish that output quality parity does not equate to process experience parity, leading to a design principle for integrated AIGC systems: adaptive usability is essential. Future platforms should implement dual-mode architectures (guided scaffolding for non-experts and advanced controls for experts) accessing the same generative backbone to ensure output consistency while optimizing process experience. By reframing the challenge from “what AI can generate” to “how humans orchestrate multiple AI tools coherently,” this study provides actionable guidance for designing multimodal AIGC systems that amplify human creativity.

Keywords:

Generative Artificial Intelligence (GenAI), Artificial Intelligence Generated Content (AIGC), Brand Design, Usability Evaluation, Platform Integration, Design Automation

1. Introduction

1. 1. Research Background

In the digital transformation era, AI-generated content (AIGC) is rapidly expanding its role as a creative tool across various fields. With advances in deep learning and generative language models, AIGC has evolved beyond simple content production to provide results that reflect human creativity and intent (Wu, 2023; Negueruela del Castillo, 2023; Arriagada, 2023).

In the design domain, the emergence of AI tools that generate core visual elements such as logos, colors, and typography has enhanced accessibility for non-designers and brought changes to designer-centered design approaches (Jung & Kim, 2018; Sun & Park, 2019). However, current AI-based brand design services focus solely on generating individual visual elements, which limits integrated management. Users must use multiple platforms in parallel (e.g., Logopony) for naming, logo, color, and character generation, and must repeat steps such as prompt development, prompt input, and concept image upload on each platform. This fragmented workflow causes inconsistencies in tone and manner between outputs, diluting brand identity and hindering the delivery of a consistent user experience.

Komara & Juhana (2025) point out that AI-generated content faces challenges in maintaining brand identity consistency, emphasizing the need for human supervision and consistency maintenance strategies. While various branding AI platforms exist, they require significant learning time and have low accessibility for general users without design expertise, and even professionals show low utilization due to lack of consistency. To overcome these issues, organic integration between tools and collaborative structures are necessary. DesignGPT, based on a multi-agent system, demonstrates that deploying multiple AI agents by role for collaboration can enhance design performance and workflow unity compared to individual tool approaches (Ding et al., 2023).

Therefore, this study proposes an integrated platform that organically connects individual AIGC tools in order to maintain consistency throughout the entire brand design process, enhancing efficiency for professionals and accessibility for non-professionals. It then verifies the platform’s usability and user satisfaction.

1. 2. Research Purpose and Questions

This study addresses two interrelated objectives in the context of AIGC-based brand design.

First, it examines whether integrating fragmented AIGC tools into a unified system can substantially improve the coherence and efficiency of the brand design workflow. As discussed in Section 2.3, current AIGC tools operate independently, leading to workflow discontinuity and visual inconsistency. While multimodal integration has been explored at the model level (Shukor et al., 2023; Lu et al., 2023), practical platform-level integration for design tasks remains underexplored. This study proposes Branderia, a data-continuity-based platform that connects naming (Gemini), color (Huemint), logo (Smashinglogo), and image generation (Midjourney) into a seamless workflow where outputs from each stage automatically become inputs for the next.

Second, it investigates how different user groups perceive and derive value from such an integrated environment. This comparative analysis is not simply about measuring “who finds the system easier to use,” but rather about understanding the nature of value orientation—such as expert efficiency versus non-expert accessibility—that emerges in integrated AIGC contexts. Prior research indicates that AIGC serves dual roles: democratizing creativity for non-professionals (Lee, 2022) and amplifying expertise for professionals (Dell’Acqua et al., 2023). However, these roles have been studied primarily in single-tool contexts. In a multi-stage, integrated platform where consistency and workflow continuity are emphasized, it remains unclear whether both groups benefit equally, or whether they experience qualitatively different forms of value. Understanding these differences is critical for designing adaptive interfaces that can optimize user experience based on expertise level—for example, providing guided scaffolding for beginners while offering advanced batch controls for experts.

To address these objectives, the study poses two research questions:

- RQ1 [Technical Validation]

Does integrating fragmented AIGC tools substantially improve the brand design process in terms of coherence and efficiency?

- RQ2 [User Value Comparison]

How do professional designers and non-designers differ in the value they derive from an integrated AIGC platform, and what do these differences imply for adaptive interface design?

To answer these questions, we developed a high-fidelity Figma prototype of Branderia and conducted a usability study with 50 participants: professional designers (n=20) and non-designers (n=30). Usability was assessed using ISO 9241-11 metrics (efficiency, effectiveness, ease of use, learnability, satisfaction) via 7-point Likert scales, and independent-samples t-tests examined between-group differences. Task completion times were recorded to measure efficiency, and qualitative observations supplemented quantitative findings.

The evaluation uses ISO 9241-11-based usability metrics (satisfaction, learnability, efficiency, effectiveness, ease of use) together with the System Usability Scale (SUS) (Figure 1).

2. Literature Review

2. 1. Brand Identity as a Strategic System

Brand identity is not merely a collection of individual design elements such as logos or colors; it signifies ‘homogeneity, consistency, unity, identity, and subjectivity,’ with the brand understood as a meaning system that consumers experience directly and indirectly (Aaker, 1994). Successful brands are built on semiotic principles: all visual and linguistic elements, such as brand names, logos, and colors, function as ‘signs’ that convey the brand’s core values, and when these signs deliver consistent messages, strong brand equity is formed (Keller, 2010). Nakanishi & Son (1997, p. 64) viewed symbols, logos, typography, colors, graphic elements, and characters as core elements that visually implement brands.

The strategic brand identity defined above consists of the core elements shown in Table 1, and these elements secure strategic consistency only when developed within the systematic process presented by Wheeler (2012), shown in Table 2.

Prior studies have defined logos that maximize brand symbolism, names that encapsulate brand meaning, slogans that deliver core messages, typography that conveys brand personality, colors that determine emotional and psychological effects, visual images that enable intuitive recognition, and characters that strengthen brand intimacy as core visual elements (Farhana, 2012; Walsh, Winterich, & Mittal, 2010; Keller, 2008; Jun & Lee, 2007; Van den Bosch, De Jong, & Elving, 2005; Stuart & Muzellec, 2004; Alessandri, 2001; Van Riel & Van den Ban, 2001; Melewar & Saunders, 2000; Baker & Balmer, 1997; Keller, 1993; Lambert, 1989). Based on the prior literature, this study classifies and defines seven core visual elements—logo/symbol, name, color, image, slogan, typography, and character.

These core visual elements are implemented as application design at actual brand touchpoints. Wheeler (2012) presents “Creating touchpoints” as the fourth stage of the brand process, emphasizing that brand identity must be consistently applied to various media such as business cards, packages, websites, and signage. Application design is the process of modifying and applying basic brand elements such as logos, colors, and typography according to actual usage contexts (Farhana, 2012; Van den Bosch et al., 2005), serving as a key mechanism for maintaining consistency in brand experience. This study defines this as the stage where brand visual elements are implemented in actual media, and includes it as one of the core outputs of the integrated platform.

Brand design generally consists of three stages: planning, visualization, and deployment. Wheeler (2012) proposed a five-step roadmap for a consistent process from brand strategy development to asset management, comprising “conducting research,” “clarifying strategy,” “designing identity,” “creating touchpoints,” and “managing assets.” This demonstrates that a systematic process is essential for maintaining consistency and integration in brand messaging. Ho (2017) established a brand strategy based on practical field research and compared brand processes with design results to concretize the process. Ryu (2023) proposed a practice-oriented process comprising Analysis and Strategy, Visualization, and Identity Building (Table 2).

2. 2. Current Status of AIGC Technology Application in Branding

In the design field, generative AI (AIGC) technology is leading a paradigm shift while performing a dual role (Zan & Jung, 2024; Younjung, H., 2024).

First, AIGC dramatically improves design accessibility for non-professionals through the ‘Democratization of Creativity’ (Lee, 2022). It lowers design barriers by enabling the generation of professional-level outputs through prompt inputs without complex design theory or software skills.

Second, AIGC functions as both a ‘Creative Partner’ and an ‘Expertise Amplifier’ for skilled professionals (Dell’Acqua et al., 2023; Rezwana & Maher, 2023). According to empirical research by Dell’Acqua et al. (2023), knowledge workers utilizing generative AI showed over 25% improvement in task completion speed, with a particularly notable 40% increase in output quality for lower-skilled workers. Skilled designers can use AIGC to rapidly explore ideas (Liu & Chilton, 2022; Oppenlaender, 2022), automate repetitive tasks, and gain new visual inspiration (Brynjolfsson et al., 2023; Epstein et al., 2023). As Verganti et al. (2020) pointed out, AIGC becomes a tool that maximizes creative potential rather than replacing professionals’ experience and intuition.

Current AIGC tools used in brand design can be classified by function as shown in Table 3. Conversational AIs like ChatGPT and Gemini handle brand naming and slogan generation; Smashinglogo and Looka provide automatic logo and visual element generation; Huemint and Coolors offer color palette suggestions; and Midjourney and DALL·E are responsible for high-resolution image and character generation.

These AIGC tools operate as individual platforms without systematic linkage, and while they demonstrate excellent performance, they have limitations in integrated utilization throughout the entire branding process due to the fragmentation issues to be discussed in the next section.

2. 3. Fragmented AIGC Environment and the Need for Integration

The rapid advancement of Artificial Intelligence Generated Content (AIGC) has led to increasing research on multimodal and system-level integration. Studies on multimodal fusion (Shukor et al., 2023; Li et al., 2024) demonstrate that combining text, image, audio, and video generation enables richer outputs than single-modality approaches. Similarly, Unified-IO 2 (Lu et al., 2023) confirms that integrating multiple modalities (vision, language, audio, and action) improves model scalability and performance. Yin et al. (2024) further emphasize that unified architectures and joint learning pipelines will be essential for the future evolution of AIGC technologies.

Despite these advancements, AIGC tools commonly used in brand design remain highly fragmented. Most operate as independent services optimized for specific outputs, resulting in several structural limitations:

(1) Workflow Discontinuity

Because tools lack interoperability, outputs cannot be reused automatically across platforms (Patel et al., 2024). Users must repeatedly craft prompts, re-upload reference materials, and manually transfer results between tools, disrupting workflow continuity and lowering overall efficiency.

(2) Visual Style Inconsistency

Differences in generative models lead to inconsistent tone and manner, even when identical prompts are used. For instance, Midjourney and DALL·E generate visually divergent results, making it difficult to maintain a coherent brand image (Cheon et al., 2024). Komara & Juhana (2025) highlight that such inconsistency challenges brand identity management without additional human intervention.

These issues—workflow discontinuity and style inconsistency—directly undermine the strategic coherence required in branding (Section 2.1). For non-experts, fragmented tools create steep learning curves and low accessibility, while professionals struggle to maintain cross-tool consistency. This results in what can be described as design fragmentation, a structural barrier preventing efficient and unified branding work.

Recent research on multi-agent design systems, such as DesignGPT (Ding et al., 2023), suggests that coordinated collaboration between specialized AI agents can improve workflow unity compared to isolated tool usage. This supports the need to examine whether an integrated AIGC environment can enhance branding processes, forming the basis of RQ1.

To date, integrated AIGC branding platforms have been limited due to heterogeneous input formats, incompatible output structures, restricted APIs, and divergent style-generation algorithms. Branderia addresses these limitations through a data-continuity architecture that standardizes intermediate outputs and routes them across modules via a central hub. This design allows naming, color, logo, and character modules to operate collaboratively while preserving semantic and visual coherence throughout the branding workflow.

2. 4. Differences in AIGC Utilization According to User Expertise

As discussed in Section 2.2, AIGC provides different value according to users’ expertise levels. These differential characteristics can cause different utilization patterns and satisfaction levels by user group in an integrated platform environment.

Prior studies have reported that perceptions of and preferences for AI tools differ according to expertise. While non-professionals prioritize immediate generation and ease of use, professionals place greater emphasis on customization and interactive performance, and tend to view outputs as starting points for exploration (Rezwana & Maher, 2023; Oppenlaender, 2022).

However, existing studies mostly focused on single AI tool usage contexts, and differences according to expertise in platform environments integrating multiple AIGC tools have not been sufficiently verified. Particularly in complex creative activities involving multi-stage processes like brand design, there is insufficient research on how the consistency and workflow continuity provided by integrated platforms deliver different value to each user group. Therefore, this study aims to compare the value provided to professional and non-professional groups and their differences through RQ2.

Against this background, the comparative study between designers and non-designers in this research is not simply about measuring who finds the system more usable. Rather, it is intended to clarify how an integrated AIGC platform may support distinct value orientations—such as professional efficiency for experts and accessible brand-building for non-experts—and how these differences should inform the design of future adaptive user interfaces.

3. Methodology

3. 1. Research Framework and Process

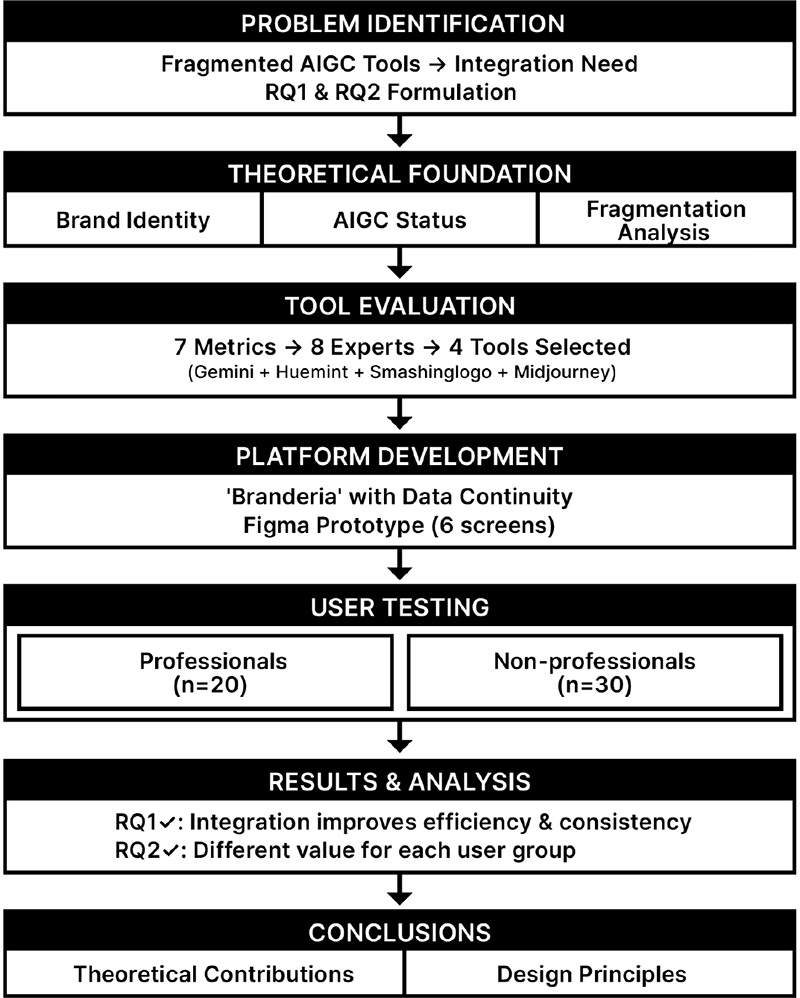

This study examines the feasibility of integrating AIGC-based design tools, implements a platform prototype, and comparatively evaluates usability by user group. For this purpose, a three-stage research framework was established.

Stage 1: Theoretical Review and Tool Classification

Through literature review, we analyzed brand identity systems, AIGC technology status, fragmentation issues, and differences according to user expertise. We identified automatable brand design elements and classified AIGC tools into four categories: naming, color, logo, and image/character.

Stage 2: AIGC Tool Evaluation and Integrated Platform Development

To evaluate brand design output quality, we established 7 evaluation metrics (accuracy, creativity, consistency, brand alignment, visual quality, generation efficiency, integrability) by integrating verified indicators from Henderson & Cote (1998), Keller (2010), and Blijlevens et al. (2017) (see 3.2.1). Eight AI expert study group members evaluated candidate tools for each of the four categories to select optimal tools. We designed the platform ‘Branderia’ integrating the selected tools according to the brand design process and implemented a prototype using Figma (see 3.3).

Stage 3: Comparative Usability Evaluation by User Group

We comparatively evaluated usability by applying the developed platform to two groups: design professionals (n=20) and non-professionals (n=30). We measured five ISO 9241-11-based indicators (see 3.4) using a 7-point Likert scale, and measured overall satisfaction with the System Usability Scale (SUS). We analyzed the data using independent-samples t-tests to address RQ1 (Does integration improve the brand design process?) and RQ2 (Do usage value differences exist between professional and non-professional groups?).
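The between-group comparison in Stage 3 can be illustrated with a minimal sketch of an independent-samples t-test. The text does not state whether equal variances were assumed, so the pooled-variance (Student) form used here is an assumption, and the ratings are hypothetical placeholders rather than study data.

```python
from math import sqrt
from statistics import mean, variance


def students_t(group_a, group_b):
    """Return (t, df) for a pooled-variance independent-samples t-test."""
    n_a, n_b = len(group_a), len(group_b)
    df = n_a + n_b - 2
    # Pooled variance weights each sample variance by its degrees of freedom.
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / df
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    t = (mean(group_a) - mean(group_b)) / standard_error
    return t, df


# Hypothetical 7-point ratings, NOT data from the study.
non_designers = [4, 5, 5, 4, 6, 5, 4, 5]
designers = [6, 6, 7, 5, 6, 7]

t_stat, df = students_t(non_designers, designers)
print(f"t({df}) = {t_stat:.2f}")
```

With the non-designer group passed first, a negative t indicates that designers rated higher, which matches the sign convention of the t values reported in Section 4.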

3. 2. AIGC Tool Evaluation and Selection

This study established an evaluation framework by comprehensively analyzing AIGC evaluation research (Cao et al., 2023; Zhang et al., 2023; Betzalel et al., 2022). These studies commonly present Accuracy, Creativity, and Consistency as core dimensions of AIGC evaluation.

Since brand design requires brand strategy alignment and professional completeness, we added Brand Alignment and Visual Quality based on brand design evaluation research (Keller, 2010; Kohli & Suri, 2000; Blijlevens et al., 2017; Henderson & Cote, 1998). Generation efficiency applied usability evaluation criteria from Nielsen (1994) and Dell’Acqua et al. (2023).

Particularly, as this study aims to develop an integrated platform, we added an Integrability indicator referencing Ding et al. (2023)’s DesignGPT research. Finally, we established 7 core indicators as shown in Table 5.

These seven metrics were selected to reflect both the technical performance of AIGC tools and the specific requirements of branding practice. Accuracy, Creativity, and Consistency are core dimensions frequently emphasized in AIGC evaluation literature. Brand Alignment and Visual Quality capture whether the generated content fits the intended brand concept and meets professional aesthetic standards. Efficiency reflects the practical need to reduce iteration time and effort in real-world design workflows. Finally, Integrability is a critical criterion in this study because the goal is not only to assess individual tools in isolation, but also to determine how well they can be connected into a coherent, multi-stage branding process. The combination of these metrics allows us to evaluate candidate tools from both content-quality and system-integration perspectives.

An AI expert study group of 8 members (5 master’s and 3 doctoral students majoring in marketing, design, and brand communication) conducted the evaluation. All evaluators had hands-on experience with various AI platforms, having completed a 16-week “AI-based Design” course. We investigated the current status of AI-based brand design platforms widely used in the market and provided a list of candidate tools from Section 2.2 (Table 3). Candidate tools for each category (naming, color, logo, image) were tested using the same brand concept, and standardized prompts were developed to conduct 10 repeated experiments per tool.

Subjective indicators (accuracy, creativity, brand alignment, visual quality, integrability) were evaluated by 8 evaluators using a 7-point Likert scale (1=strongly disagree, 7=strongly agree) and averaged. Accuracy was measured through 5 items including prompt reflection and keyword inclusion, while creativity measured originality and freshness according to Torrance (1966) criteria.

Objective indicators (consistency, generation efficiency) were automatically measured. Consistency was measured using CIE Lab color difference (ΔE) for colors and CLIP model cosine similarity for logos and images, then converted to a 7-point scale. Generation efficiency recorded time required and number of attempts, then normalized to a 7-point scale.
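The objective consistency measures can be sketched as follows. The text does not specify which ΔE formula or which score mapping was used, so the CIE76 (Euclidean) formula, the linear mapping onto the 7-point scale, and the assumed worst-case ΔE of 50 are all illustrative assumptions. The short vectors stand in for CLIP embeddings, which would in practice come from a CLIP model.

```python
from math import sqrt


def delta_e_cie76(lab1, lab2):
    """Euclidean distance between two CIE L*a*b* colors (CIE76 formula)."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(lab1, lab2)))


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sqrt(sum(a * a for a in u))
    norm_v = sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def to_seven_point(value, worst, best):
    """Linearly map value from [worst, best] onto [1, 7], clamped at both ends."""
    fraction = (value - worst) / (best - worst)
    fraction = max(0.0, min(1.0, fraction))
    return 1 + 6 * fraction


# Hypothetical inputs, NOT measurements from the study.
color_diff = delta_e_cie76((50, 10, 10), (50, 13, 14))
color_score = to_seven_point(color_diff, worst=50, best=0)  # smaller delta E is better

image_sim = cosine_similarity((0.2, 0.9, 0.1), (0.25, 0.85, 0.2))
image_score = to_seven_point(image_sim, worst=0, best=1)  # larger similarity is better

print(round(color_score, 2), round(image_score, 2))
```

Passing `worst` and `best` explicitly lets the same helper handle metrics where lower is better (ΔE, generation time) and metrics where higher is better (cosine similarity).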

Scores for the seven indicators were summed to give a total out of 49 points (7 indicators × 7 points), and the highest-scoring tool in each category was selected (Table 6).
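The selection rule amounts to an unweighted sum followed by an argmax within each category. A minimal sketch, using placeholder tool names and hypothetical scores rather than the values in Table 6:

```python
METRICS = (
    "accuracy", "creativity", "brand_alignment", "visual_quality",
    "consistency", "efficiency", "integrability",
)


def total_score(scores):
    """Sum the seven 7-point metric scores into a total out of 49."""
    missing = [m for m in METRICS if m not in scores]
    if missing:
        raise ValueError(f"missing metrics: {missing}")
    return sum(scores[m] for m in METRICS)


def select_best(candidates):
    """Return the name of the candidate tool with the highest total."""
    return max(candidates, key=lambda name: total_score(candidates[name]))


# Hypothetical scores for two placeholder tools, NOT values from Table 6.
candidates = {
    "tool_a": dict(zip(METRICS, (6.0, 5.5, 6.0, 5.0, 6.5, 5.0, 6.0))),
    "tool_b": dict(zip(METRICS, (5.0, 6.0, 5.5, 5.5, 5.0, 6.0, 4.5))),
}
best = select_best(candidates)
print(best, total_score(candidates[best]))
```

Because every metric carries equal weight, a tool that is strong on integrability can outrank one with higher visual quality; a weighted sum would be the natural variation if some criteria mattered more.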

Gemini received the highest scores in prompt intent reflection rate and brand concept alignment, and provided detailed semantic interpretation for generated names. Huemint received high evaluations in creativity and consistency with its rich visual templates and intuitive interface. Smashinglogo demonstrated strengths in integrability with various customization options and SVG output support. Midjourney showed superior performance compared to other tools in style control capability and visual completeness.

The selected tool combination serves as the core AI modules for this study’s integrated platform ‘Branderia’, establishing a foundation that simultaneously secures brand consistency and work efficiency.

3. 3. Integrated Platform ‘Branderia’ System Architecture and Prototype Development

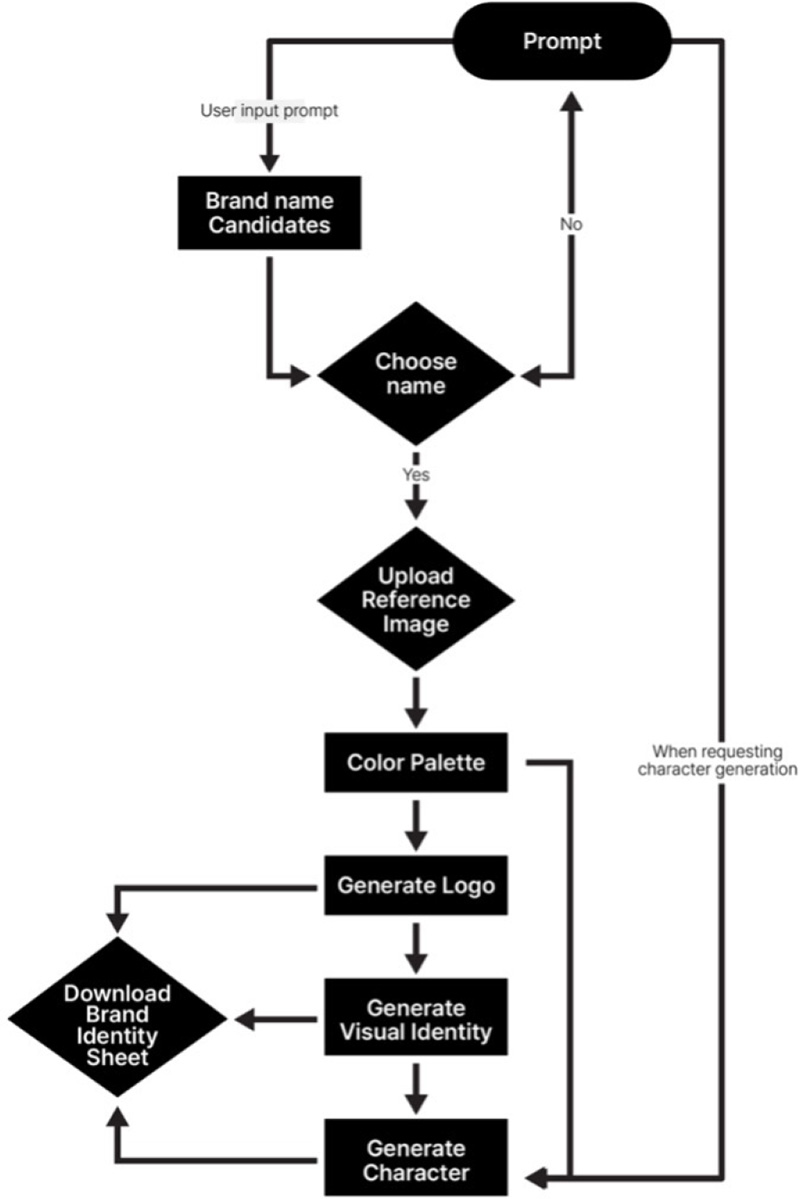

We designed the integrated platform ‘Branderia’ by mapping the selected four AIGC tools (Gemini, Huemint, Smashinglogo, Midjourney) to the brand design process (Planning-Visualization-Deployment). The platform’s core design principle is ‘Data Continuity’, where output data from each stage is automatically transferred as input for the next stage, maintaining consistency.

Figure 2 shows Branderia’s system architecture. Interoperability between the selected AIGC tools is handled through a data-continuity hub implemented at the platform level. Rather than manually exporting and importing assets between tools, Branderia standardizes intermediate results into a shared data schema that can be passed across modules. For example, the brand name, core values, and semantic keywords generated by Gemini are converted into structured text fields, which are then reused as input parameters for Huemint’s color palette generation and Smashinglogo’s logo creation. Similarly, color codes and style descriptors selected in the color module are transferred as constraints for Midjourney in the character and image generation stage. All tools interact through consistently formatted prompts, color codes (e.g., HEX, RGB), and vector or raster file formats (e.g., SVG, PNG), which allows the platform to maintain continuity of brand semantics and visual style across the entire workflow. This design addresses the ‘workflow discontinuity’ and ‘style inconsistency’ issues raised in Section 2.3.
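The data-continuity idea can be illustrated with a small sketch in which one shared record is filled in stage by stage and later stages build their inputs from it. The `BrandContext` schema, its field names, and the stage functions are hypothetical; they do not call the real Gemini, Huemint, or Midjourney services and are not the platform's actual implementation.

```python
from dataclasses import dataclass, field


@dataclass
class BrandContext:
    """Shared record passed between modules (hypothetical schema)."""
    keywords: list[str]
    name: str = ""
    core_values: list[str] = field(default_factory=list)
    palette_hex: list[str] = field(default_factory=list)


def apply_naming(ctx: BrandContext, name: str, core_values: list[str]) -> BrandContext:
    """Store the naming module's output as structured text fields."""
    ctx.name = name.strip()
    ctx.core_values = [v.strip().lower() for v in core_values]
    return ctx


def apply_palette(ctx: BrandContext, palette_hex: list[str]) -> BrandContext:
    """Store the color module's output as normalized HEX codes."""
    if not ctx.name:
        raise ValueError("naming stage must run before the color stage")
    ctx.palette_hex = ["#" + c.lstrip("#").upper() for c in palette_hex]
    return ctx


def image_prompt(ctx: BrandContext, subject: str) -> str:
    """Compose an image prompt constrained by the earlier stages' outputs."""
    values = ", ".join(ctx.core_values)
    colors = ", ".join(ctx.palette_hex)
    return f"{subject} for the brand '{ctx.name}', conveying {values}, using colors {colors}"


# Placeholder values standing in for real module outputs.
ctx = BrandContext(keywords=["travel", "friendly"])
ctx = apply_naming(ctx, "Travelify", ["Adventure", "Warmth"])
ctx = apply_palette(ctx, ["ff6b35", "#004e89"])
prompt = image_prompt(ctx, "mascot character")
print(prompt)
```

Normalizing each output as it enters the shared record means downstream modules never need to know which tool produced a value or in what format.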

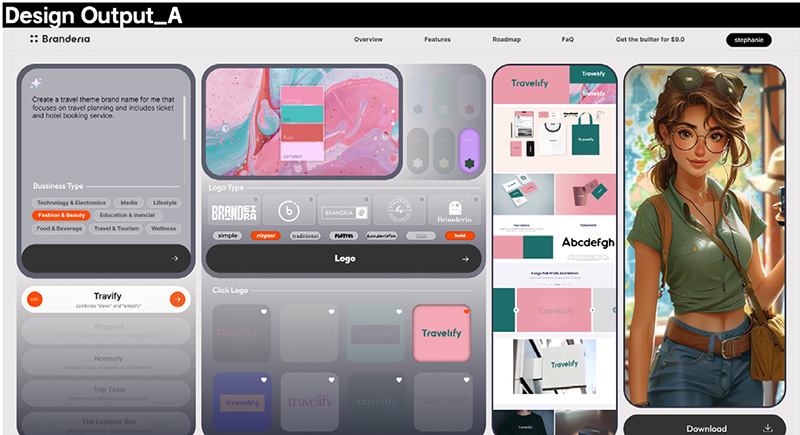

We developed a web-based high-fidelity prototype using Figma. The prototype consists of six main screens: dashboard, naming, color, logo, application design generation, and character generation, completed at an interactive level allowing users to experience the actual brand design process.

Figure 3 shows the main screen for inputting brand keywords, while Figure 4 displays the visualization-stage dashboard, where users can confirm the final brand identity by combining generated logos, color palettes, application designs, and characters. The prototype simulates ‘data continuity,’ with each module sharing core brand data, and is used to verify the efficacy of the integrated process. The complete interactive prototype is publicly available online (see footnote 1), so readers can directly experience the integrated workflow, including all AI generation modules and the data-continuity features described in this study.

3. 4. Usability Evaluation Model Design

For platform usability evaluation, we adopted the ISO 9241-11 (1998, 2016)-based usability definition. ISO 9241-11 defines usability as Effectiveness, Efficiency, and Satisfaction, emphasizing the user’s task performance perspective in actual environments. Gould & Lewis (1985) defined usability as the degree to which users can successfully perform desired tasks through the system, emphasizing the incorporation of user perspective feedback from the early stages of system design, and presented Ease of Learning and Ease of Use. Shackel (1991) added Flexibility and Attitude, while Nielsen (1994) added Memorability and Errors.

This study designed an evaluation model by comparatively analyzing prior research as shown in Table 7, deriving five core indicators (satisfaction, ease of learning, efficiency, effectiveness, ease of use) suitable for platform characteristics.

3. 5. Platform User Evaluation Design

To verify the usability of the developed Branderia prototype, participants were recruited into two groups according to design expertise. The design professional group (n=20) consisted of graduate students majoring in visual design and designers, with participants selected primarily from those specializing in brand design and visual communication design. The non-professional group (n=30) consisted of general users with brand launching plans or branding needs. A total of 50 participants voluntarily consented after receiving prior explanations about the research purpose, procedures, and data collection scope. The experiment was conducted using a Figma clickable prototype in a desktop environment equipped with 27-32 inch monitors.

The questionnaire consists of 13 evaluation questions across four domains that have been validated in prior usability evaluation studies (Table 8). The selected evaluation criteria were tailored to be assessable within a Figma-based prototype environment.

The two branding scenarios were selected to represent different types of brand contexts. The “Branderia” startup scenario reflects a technology- and service-oriented brand, whereas the “Travelify” scenario represents a lifestyle- and experience-oriented travel brand. By including Travelify, the study aims to examine whether the integrated platform can support coherent identity building not only for abstract service brands but also for narrative-rich, lifestyle brands that require consistent tone and emotional appeal across visual elements.

The usability evaluation employed a structured three-phase methodology conducted over 60 minutes:

Phase 1 - Pre-task Orientation (10 min): Participants received comprehensive briefing on study objectives and platform tutorial covering core functionalities including brand name generation, color palette creation, logo design, application mockups, character development, and identity sheet preview.

Phase 2 - Task Execution (30 min): Participants selected one of two predetermined branding scenarios (Branderia startup or Travelify travel brand) and completed the entire design workflow. Task completion times were recorded with a stopwatch to quantify platform efficiency against conventional design processes. Participants were free to use the regeneration and editing functions throughout the session.

Phase 3 - Post-task Assessment (15 min): Participants completed a standardized usability questionnaire employing 7-point Likert scales and provided qualitative feedback on overall platform satisfaction.

4. Results

4. 1. Overall Usability Assessment

To verify the reliability and validity of the usability evaluation questionnaire, three analyses were conducted using SPSS 26.0: reliability analysis, correlation test, and validity analysis. Cronbach’s alpha was calculated to verify internal consistency, resulting in a score of 0.93, indicating excellent reliability. Pearson correlation analysis revealed statistically significant positive correlations (p < .05) among the four core evaluation dimensions, supporting the construct validity of the instrument.
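For reference, Cronbach's alpha for a k-item scale is k/(k−1) multiplied by one minus the ratio of the summed item variances to the variance of the participants' total scores. The study computed it in SPSS; the sketch below shows the same formula in plain Python on hypothetical responses, not on the study's questionnaire data.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha; `responses` holds one list of item scores per participant."""
    k = len(responses[0])
    items = list(zip(*responses))  # transpose rows into per-item columns
    item_variance_sum = sum(variance(column) for column in items)
    total_variance = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


# Hypothetical 7-point responses (4 participants x 3 items), NOT study data.
responses = [
    [5, 6, 5],
    [6, 7, 6],
    [4, 4, 5],
    [7, 7, 6],
]
alpha = cronbach_alpha(responses)
print(round(alpha, 2))
```

Alpha approaches 1 when items rise and fall together across participants, which is why a value of 0.93 is read as excellent internal consistency.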

The overall usability score for the Branderia platform was 5.61 out of 7 (SD = 0.70), and every evaluation item averaged 5.0 or higher on the 7-point Likert scale (Table 9). Efficiency (M = 5.87, SD = 0.64) and Satisfaction (M = 5.76, SD = 0.72) received the highest ratings, indicating strong user satisfaction with the platform’s workflow and overall experience, while Ease of Use (M = 5.14, SD = 0.90) was relatively lower but still above the scale midpoint.

The comparative analysis between groups showed that the designer group scored significantly higher than the non-designer group in all usability evaluation items. In particular, Ease of Use (t = -8.42, p < .001) and Satisfaction (t = -7.06, p < .001) showed high statistical significance. This suggests that while the platform is broadly usable, entry barriers exist for users without design experience, particularly in prompt input, interface navigation, and interaction complexity. Designers, by contrast, tended to understand the work structure and visual flow more quickly and to perform tasks efficiently, indicating that the platform is well suited to professional users.

In the output quality domain, the designer and non-designer groups recorded nearly identical average values (M = 5.68), with designers showing a statistically significant but practically minimal advantage (t = -2.68, p = .010, Table 9). This minimal difference indicates that the Branderia platform provides consistently high-quality outputs regardless of user background. Significant differences were also observed in the satisfaction domain. The designer group reported very high satisfaction (M = 6.32, SD = 0.47), while the non-designer group reported a lower level (M = 5.39, SD = 0.53), and the difference was statistically significant (t = -7.06, p < .001). In particular, designers evaluated the quality of the results and the overall user experience positively and showed a strong intention to reuse the platform. The non-designer group, by contrast, may have been influenced by initial inconveniences in using the platform, which affected their overall satisfaction.

4.2. Detailed Analysis of Each Usability Dimension

The average task time for all users (N = 50) was 23.86 minutes (SD = 3.79), and significant differences in time were observed between user groups. The designer group (n = 20) completed all branding stages in an average of 19.9 minutes (SD = 1.66), while the non-designer group (n = 30) took an average of 26.5 minutes (SD = 2.11), approximately 33.2% more time.
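The arithmetic behind these figures can be checked with a short sketch. It assumes the group sizes given in the Methods (20 designers and 30 non-designers) and uses only the reported group means; the variable names are illustrative.

```python
n_designers, n_non_designers = 20, 30
mean_designers, mean_non_designers = 19.9, 26.5  # minutes, as reported

# Sample-size-weighted mean across both groups.
overall_mean = (
    n_designers * mean_designers + n_non_designers * mean_non_designers
) / (n_designers + n_non_designers)

gap = mean_non_designers - mean_designers
extra_time_pct = gap / mean_designers * 100      # non-designers relative to designers
time_saved_pct = gap / mean_non_designers * 100  # designers relative to non-designers

print(round(overall_mean, 2))    # 23.86
print(round(extra_time_pct, 1))  # 33.2
print(round(time_saved_pct, 1))  # 24.9
```

The 33.2% figure therefore expresses the additional time non-designers needed relative to designers. Expressed the other way, designers used about 24.9% less time than non-designers.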

In particular, the difference between designers (M = 8.10, SD = 0.65) and non-designers (M = 10.59, SD = 0.78) was most pronounced in the logo design stage, suggesting that the visual judgment and composition skills required in the logo creation process are closely related to design expertise. On the other hand, in the character generation stage, the difference between the two groups was relatively small (non-designers M = 1.44, designers M = 0.90) thanks to the automated system functions.

To analyze user perception differences in terms of the ease of use of the Branderia platform more precisely, this study conducted a statistical analysis of three core sub-items—function recognition, navigation intuition, and learning ease—by group.

The analysis revealed that non-designer users scored significantly lower than designer users on all sub-items, with all differences reaching statistical significance at the p < .001 level.

The overall average for the Function Recognition item was M = 5.58 (SD = 0.76), with the designer group recording M = 6.20 (SD = 0.41) and the non-designer group recording M = 5.17 (SD = 0.65). The t-value was -6.90 (p < .001), indicating that designers tend to grasp the functional structure and purpose of the platform more quickly and clearly. Navigation Intuition had an overall average of M = 5.02 (SD = 0.80), with designers scoring M = 5.75 (SD = 0.44) and non-designers scoring M = 4.53 (SD = 0.57), a significant difference between groups (t = -8.45, p < .001). These results indicate that design experience significantly influences platform navigation ability and suggest that UI design elements such as menu structure, button placement, and feature navigation may not be intuitive for non-designers.

Learning Ease scored lowest among non-designers (M = 4.20, SD = 0.66), which supports the claim that design experience significantly influences initial learning ability and adaptation speed on the platform. In particular, some non-designers observed in the study were unable to recognize clickable buttons because of small text, or were confused about the affordance of buttons during actual use.
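The group comparisons can be approximately re-derived from the reported means, standard deviations, and group sizes. The sketch below assumes an unequal-variance (Welch) t-test with non-designers as the first group and n = 30 for that group; the text states only that independent samples t-tests were used, so the test variant and the helper name `welch_t` are assumptions.

```python
import math


def welch_t(mean1, sd1, n1, mean2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2  # squared standard errors per group
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


# Group 1 = non-designers, group 2 = designers (values as reported in the text).
t_function, df_function = welch_t(5.17, 0.65, 30, 6.20, 0.41, 20)
t_navigation, df_navigation = welch_t(4.53, 0.57, 30, 5.75, 0.44, 20)

print(round(t_function, 2), round(t_navigation, 2))
```

Under these assumptions the sketch gives roughly -6.87 and -8.52, close to the reported -6.90 and -8.45. Small gaps of this size are expected when recomputing from rounded means and standard deviations.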

These results clearly show that non-designers experience difficulties in all three sub-dimensions of usability: interpreting the interface, recognizing how to operate it, and initial learning speed. This indicates that the current platform does not fully meet the design criteria for a “beginner-friendly” product. Future versions should focus on improving the UI/UX by strengthening initial guidance logic, simplifying navigation structures, and enhancing the perceptibility of operational elements. This will help reduce cognitive load for non-designer users and enhance overall user experience and acceptance.

In the Output Satisfaction category, the designer group scored M = 5.85 (SD = 0.37), while the non-designer group scored M = 5.57 (SD = 0.50). This difference was statistically significant (t = -2.30, p = .026), indicating that designers evaluated the completeness and quality of the output more positively.

The most notable difference was observed in the Aesthetics Completeness category. The designer group scored M = 6.00 (SD = 0.00), while the non-designer group scored M = 5.70 (SD = 0.47). The difference between the groups was statistically significant (t = -3.53, p = .001). This suggests that designers are more sensitive to aesthetic standards and visual consistency, indicating that they found the design outcomes of the platform sufficiently satisfactory.

Interestingly, in the Practicality category, the non-designer group recorded a higher evaluation (M = 5.83 vs. M = 5.50). This difference was statistically significant at t = 2.22, p = .032, indicating that non-designer users placed greater value on the practicality aspect, believing that the actual results could be directly utilized for their business or intended purpose. This result reflects a tendency to prioritize usability over creativity in design.

In the Brand Consistency category, the average scores of designers and non-designers were relatively close (non-designers M = 5.60, designers M = 5.35), and the difference was not statistically significant (t = 1.24, p = .223). It is nonetheless noteworthy that the non-designer group scored higher on average. This suggests that brand consistency, a key value of integrated branding automation platforms, is positively perceived by both groups, with a tendency toward higher evaluation among non-designers.

In the interface satisfaction domain, the designer group showed high satisfaction (M = 6.15, SD = 0.37), while the non-designer group gave a relatively lower evaluation (M = 5.40, SD = 0.50). The difference between the two groups was statistically significant (t = -6.13, p < .001), suggesting that designers have higher standards for visual structure, information layout, and operational responses, and that the platform met their expectations. Non-designers, on the other hand, may have had difficulty adapting to the interface because of unfamiliarity with its operational methods and terminology.

Within the satisfaction domain, the largest t-value (-7.34, p < .001) was observed for the overall satisfaction item. The designer group scored M = 6.35 (SD = 0.49), while the non-designer group scored M = 5.17 (SD = 0.65), a difference of approximately 1.2 points. This shows a large satisfaction gap between designers and general users in the overall experience, including the functionality, workflow, and visual completeness of the platform.

The intention to reuse the platform was also higher among designers (M = 6.45, SD = 0.51) than among non-designers (M = 5.60, SD = 0.62), a significant difference (t = -5.28, p < .001). This suggests that designers are more likely to reuse the platform repeatedly and consistently trust its efficiency and quality. Non-designers, on the other hand, may have experienced initial barriers to entry and low learning ease, which could have negatively influenced their reuse intention. Overall, users scored the reusability item at 5.94, the highest score among all evaluation items. This suggests that the platform has the potential to provide ongoing value to users.
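The relationship between the item-level and domain-level scores can be illustrated with a brief consistency check. It assumes that each domain score is the unweighted mean of its sub-items and that overall item scores are weighted by group size (20 designers, 30 non-designers). These aggregation rules are not stated in the text and are inferred only because they reproduce the reported values.

```python
def mean(values):
    return sum(values) / len(values)


def size_weighted(designer_mean, non_designer_mean, n_designers=20, n_non_designers=30):
    """Overall mean across both groups, weighted by assumed group sizes."""
    total = n_designers * designer_mean + n_non_designers * non_designer_mean
    return total / (n_designers + n_non_designers)


# Satisfaction sub-items: interface satisfaction, overall satisfaction, reuse intention.
satisfaction_designers = mean([6.15, 6.35, 6.45])
satisfaction_non_designers = mean([5.40, 5.17, 5.60])

# Ease-of-use sub-items for non-designers: function recognition, navigation, learning ease.
ease_non_designers = mean([5.17, 4.53, 4.20])

reuse_overall = size_weighted(6.45, 5.60)

print(round(satisfaction_designers, 2))      # 6.32
print(round(satisfaction_non_designers, 2))  # 5.39
print(round(ease_non_designers, 2))          # 4.63
print(round(reuse_overall, 2))               # 5.94
```

Under these assumptions the recomputed values match the reported domain scores for satisfaction (6.32 vs. 5.39), non-designer ease of use (4.63), and the overall reusability item (5.94).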

4.3. Verification of Research Questions

- RQ1: Does integrating fragmented AIGC tools substantially improve the brand design process in terms of coherence and efficiency?

The empirical results strongly support RQ1. Integration improved the brand design process along three key dimensions.

First, operational efficiency was significantly enhanced. The average task completion time of 23.86 minutes represents a substantial reduction compared to conventional multi-tool workflows. More importantly, the integrated architecture eliminated manual data transfer between tools—such as exporting files, switching platforms, and re-entering prompts—by automating transitions through the data-continuity hub (Section 3.3). This directly addresses the workflow discontinuity problem identified in Section 2.3.

Second, the platform successfully maintained visual and semantic coherence. Both designers and non-designers rated brand consistency highly (M = 5.50), with no statistically significant difference between groups. This near-equivalence indicates that automated propagation of color codes, semantic keywords, and style descriptors effectively preserves coherence without requiring users to manually enforce consistency, resolving the style inconsistency issues typical of isolated AIGC tools.

Third, output quality was comparable across expertise levels (both M = 5.68). This demonstrates that non-designers were able to produce professional-grade branding outcomes, validating the platform’s core goal of democratizing access to coherent brand creation. Collectively, these findings confirm that platform-level integration delivers practical improvements beyond what fragmented AIGC tools can provide.
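The propagation mechanism referred to above can be pictured as a shared context object that each module reads and extends. The following is a minimal sketch under stated assumptions, not the platform's implementation: `BrandContext`, the module functions, and their placeholder outputs are all hypothetical, and no external AI service is called.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class BrandContext:
    """Hypothetical shared state passed from one module to the next."""
    brief: str
    name: str = ""
    keywords: Tuple[str, ...] = ()
    palette: Tuple[str, ...] = ()  # hex color codes


def naming_module(ctx: BrandContext) -> BrandContext:
    # Placeholder output; a real module would call a text-generation service.
    return replace(ctx, name="Travelify", keywords=("travel", "friendly"))


def color_module(ctx: BrandContext) -> BrandContext:
    # Placeholder palette; a real module would derive colors from ctx.keywords.
    return replace(ctx, palette=("#1E88E5", "#FFC107"))


def build_logo_prompt(ctx: BrandContext) -> str:
    # The logo stage reuses upstream outputs, so nothing is re-entered by hand.
    return (
        f"Logo for {ctx.name}; keywords: {', '.join(ctx.keywords)}; "
        f"colors: {', '.join(ctx.palette)}"
    )


def run_pipeline(
    ctx: BrandContext, modules: List[Callable[[BrandContext], BrandContext]]
) -> BrandContext:
    for module in modules:
        ctx = module(ctx)
    return ctx


final_ctx = run_pipeline(
    BrandContext(brief="Lifestyle travel brand"), [naming_module, color_module]
)
logo_prompt = build_logo_prompt(final_ctx)
print(logo_prompt)
```

Because every downstream prompt is assembled from the same context, the name, keywords, and color codes chosen early in the workflow carry through to later stages without manual transfer.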

- RQ2: How do professional designers and non-designers differ in the value they derive from an integrated AIGC platform, and what do these differences imply for adaptive interface design?

The results indicate that Branderia delivers differentiated yet complementary value depending on user expertise.

At the outcome level, both groups benefited equally from the integrated workflow. Output quality and brand consistency ratings were statistically equivalent, and reuse intention was high across all participants. This confirms that the platform provides accessible, high-quality branding support regardless of prior design experience.

However, these similarities mask fundamentally different interaction mechanisms. Designers completed tasks significantly faster and reported higher ease of use and satisfaction. This pattern can be explained by mental model alignment: designers possess internalized schemas of the branding process, and Branderia’s sequential workflow mirrors these structures. As a result, the platform functions as an efficiency amplifier, enabling designers to execute familiar processes with reduced friction.

In contrast, non-designers experienced lower process efficiency and learnability, reflecting higher cognitive load when navigating a multi-stage branding workflow. Nevertheless, non-designers rated practicality significantly higher than designers. This reversal suggests a difference in expectation frameworks: designers treat AI-generated outputs as intermediate materials for refinement, whereas non-designers perceive them as final, usable solutions. The platform’s guided, step-by-step structure thus serves as scaffolding that compensates for limited domain knowledge by constraining choices and embedding professional logic into the workflow.

These findings resolve the apparent tension between accessibility and usability. Branderia achieves accessibility by enabling non-designers to generate coherent, high-quality brand outputs, while professionals retain efficiency advantages due to transferable expertise. The key implication is that integrated AIGC platforms should not aim for universal usability through a single interface, but for adaptive usability. Guided modes with explanatory support can optimize accessibility for non-designers, while advanced controls and shortcuts can further enhance efficiency for expert users.

In summary, RQ2 demonstrates that an integrated AIGC platform distributes value differently by expertise level—amplifying efficiency for designers through mental model alignment, while providing accessibility for non-designers through guided scaffolding. This differentiated value structure offers actionable principles for the design of future multimodal AIGC systems beyond brand design.

5. Conclusion and Discussion

This study demonstrates that multimodal AIGC integration represents a fundamental architectural shift in creative tool design. Through Branderia’s data-continuity framework—where outputs from naming, color, logo, and character modules are automatically standardized and propagated—we resolved the fragmentation problem that has limited AIGC adoption in professional branding. Empirical validation with 50 users confirmed that this system-level approach maintains both efficiency and coherence while enabling non-designers to achieve professional-grade output quality statistically equivalent to designers.

However, the findings reveal a critical nuance: output quality parity does not equate to process experience parity. Non-designers needed approximately 33.2% more time than designers (26.5 vs. 19.9 min), and designers reported significantly higher ease of use (5.90 vs. 4.63, p < .001), reflecting efficiency gains through mental model alignment with the platform’s workflow structure. Conversely, non-designers rated practicality higher (5.83 vs. 5.50, p = .032), valuing the platform’s ability to produce immediately usable deliverables despite lower process efficiency. These differences are not design failures but evidence of expertise-dependent value mechanisms, where the same system generates fundamentally different forms of value depending on user expertise.

This distinction leads to a design principle for integrated AIGC systems: universal usability is insufficient; adaptive usability is essential. Rather than pursuing a single interface for all users, future platforms should implement dual-mode architectures—guided scaffolding for non-experts (contextual tooltips, constrained options, example-based tutorials) and advanced controls for experts (batch operations, granular parameters, shortcut navigation). Both modes access the same generative backbone, ensuring output quality consistency while optimizing process experience.
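One way to picture such a dual-mode architecture is as two interface configurations layered over a single generation function. The sketch below is illustrative only: `InterfaceMode`, `generate_candidates`, `present_options`, and the specific settings are hypothetical names and values, not features of the evaluated prototype.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class InterfaceMode:
    name: str
    show_tooltips: bool
    max_options: Optional[int]  # None means no limit on displayed candidates
    batch_operations: bool


# Hypothetical settings for the two modes.
GUIDED = InterfaceMode("guided", show_tooltips=True, max_options=3, batch_operations=False)
ADVANCED = InterfaceMode("advanced", show_tooltips=False, max_options=None, batch_operations=True)


def generate_candidates(prompt: str, count: int = 8) -> List[str]:
    # Stand-in for the shared generative backbone used by both modes.
    return [f"{prompt} / variant {i + 1}" for i in range(count)]


def present_options(candidates: List[str], mode: InterfaceMode) -> List[str]:
    # Guided mode constrains choices; advanced mode exposes every candidate.
    if mode.max_options is None:
        return list(candidates)
    return list(candidates[: mode.max_options])


candidates = generate_candidates("minimal travel logo")
guided_view = present_options(candidates, GUIDED)
advanced_view = present_options(candidates, ADVANCED)
print(len(guided_view), len(advanced_view))  # 3 8
```

Both views draw on the same candidate pool, so the modes differ only in how much of the output and how many controls are exposed to the user.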

More broadly, this research reframes the central challenge of generative AI in creative domains. As model capabilities mature, the bottleneck shifts from “what can AI generate?” to “how can humans effectively orchestrate multiple AI tools toward coherent outcomes?” In branding, this means coordinating naming, color, logo, and visual identity generation into a semantically and visually unified system, a challenge addressed through data-continuity architecture. The same principle applies to adjacent domains: architectural design (coordinating floor plans, elevations, material selections), product design (coordinating form, function, manufacturing constraints), and content creation (coordinating text, image, video across platforms).

By combining a technical blueprint (data-continuity integration) with a design philosophy (adaptive usability), this study provides actionable guidance for building AI systems that amplify human creativity rather than replacing it. The goal is dual empowerment: accelerating expert workflows while democratizing access for novices, enabling both groups to focus on strategic creative decisions rather than operational tool management. As AIGC technologies continue to advance, success will depend less on improving individual models and more on architecting integrated systems that preserve human agency, coherence, and control.

Glossary

1) Branderia Prototype (Accessed: December 2024): https://www.figma.com/proto/zWaE5X1ISLtUDkbQ1lSMOU/%E2%97%8F2024-2-Branding-web-design?page-id=703%3A643&team_id=1301917540023692950&node-id=703-1209&scaling=minzoom&content-scaling=fixed&t=2lRSE9G0ie1DKKrD-1

Notes

Copyright: This is an Open Access article distributed under the terms of the Creative Commons Attribution Non-Commercial License (http://creativecommons.org/licenses/by-nc/3.0/), which permits unrestricted educational and non-commercial use, provided the original work is properly cited.

References

-

Aaker, D. A. (1994). Building a brand: The Saturn story. California Management Review, 36(2), 114-133.

[https://doi.org/10.2307/41165748]

- Arriagada, L. (2023). CG-art: An aesthetic discussion of the relationship between artistic creativity and computation [Master's thesis, University of Groningen].

-

Baker, M. J., & Balmer, J. M. (1997). Visual identity: trappings or substance?. European Journal of marketing, 31(5/6), 366-382.

[https://doi.org/10.1108/eb060637]

- Betzalel, E., Penso, C., Navon, A., & Fetaya, E. (2022). A study on the evaluation of generative models. arXiv preprint arXiv:2206.10935.

-

Blijlevens, J., Thurgood, C., Hekkert, P., Chen, L. L., Leder, H., & Whitfield, T. W. (2017). The Aesthetic Pleasure in Design Scale: The development of a scale to measure aesthetic pleasure for designed artifacts. Psychology of Aesthetics, Creativity, and the Arts, 11(1), 86.

[https://doi.org/10.1037/aca0000098]

- Brynjolfsson, E. (2023). The turing trap: The promise & peril of human-like artificial intelligence. In Augmented education in the global age (pp. 103-116). Routledge.

- Cao, Y., Li, S., Liu, Y., Yan, Z., Dai, Y., Yu, P. S., & Sun, L. (2023). A comprehensive survey of AI-generated content (AIGC): A history of generative AI from GAN to ChatGPT. arXiv preprint arXiv:2303.04226.

-

Cheon, M. R., Lee, S. W., & Kim, H. G. (2024). A study on the direction of liberal arts education according to the development of image-generating AI. Journal of General Education Research, 5(2), 5-34.

[https://doi.org/10.37998/LE.2024.5.2.5]

-

Dell'Acqua, F., McFowland III, E., Mollick, E. R., Lifshitz-Assaf, H., Kellogg, K., Rajendran, S., ... & Lakhani, K. R. (2023). Navigating the jagged technological frontier: Field experimental evidence of the effects of AI on knowledge worker productivity and quality. Harvard Business School Technology & Operations Mgt. Unit Working Paper, (24-013).

[https://doi.org/10.2139/ssrn.4573321]

-

Ding, S., Chen, X., Fang, Y., Liu, W., Qiu, Y., & Chai, C. (2023, December). Designgpt: Multi-agent collaboration in design. In 2023 16th International Symposium on Computational Intelligence and Design (ISCID) (pp. 204-208). IEEE.

[https://doi.org/10.1109/ISCID59865.2023.00056]

-

Epstein, Z., Hertzmann, A., Investigators of Human Creativity, Akten, M., Farid, H., Fjeld, J., ... & Smith, A. (2023). Art and the science of generative AI. Science, 380(6650), 1110-1111.

[https://doi.org/10.1126/science.adh4451]

-

Farhana, M. (2012). Brand elements lead to brand equity: Differentiate or die. Information management and business review, 4(4), 223-233.

[https://doi.org/10.22610/imbr.v4i4.983]

-

Gould, J. D., & Lewis, C. (1985). Designing for usability: Key principles and what designers think. Communications of the ACM, 28(3), 300-311.

[https://doi.org/10.1145/3166.3170]

-

Henderson, P. W., & Cote, J. A. (1998). Guidelines for selecting or modifying logos. Journal of marketing, 62(2), 14-30.

[https://doi.org/10.1177/002224299806200202]

-

Ho, A. G. (2017, May). Explore the categories on different emotional branding experience for optimising the brand design process. In International Conference of Design, User Experience, and Usability (pp. 18-34). Cham: Springer International Publishing.

[https://doi.org/10.1007/978-3-319-58637-3_2]

- International Organization for Standardization. (1998). Ergonomic requirements for office work with visual display terminals (VDTs) - Part 11: Guidance on usability (ISO 9241-11:1998).

- International Organization for Standardization. (2016). Ergonomics of human-system interaction - Part 11: Usability: Definitions and concepts (ISO/DIS 9241-11.2:2016).

-

Jeon, E., & Jung, U. (2024). A study on the city brand character design using AIGC: Focused on Huangshan City. Journal of Korean Society of Design Culture, 30(4), 503-513.

[https://doi.org/10.18208/ksdc.2024.30.4.503]

-

Jeong, W.-J., & Kim, S. I. (2018). A study on the role of designer in the 4th industrial revolution: Focusing on design process and A.I based design software. Journal of Digital Convergence, 16(8), 279-285.

[https://doi.org/10.14400/JDC.2018.16.8.279]

- Keller, K. L., Parameswaran, M. G., & Jacob, I. (2010). Strategic brand management: Building, measuring, and managing brand equity. Pearson Education India.

-

Keller, K. L. (1993). Conceptualizing, measuring, and managing customer-based brand equity. Journal of marketing, 57(1), 1-22.

[https://doi.org/10.1177/002224299305700101]

- Keller, K. L. (2008). Brand planning. Utah: Brigham Young University.

-

Kohli, C., & Suri, R. (2000). Brand names that work: A study of the effectiveness of different types of brand names. Marketing Management Journal, 10(2), 112-120.

[https://doi.org/10.63963/001c.150768]

- Komara, I., & Juhana, A. (2025). The Effect of AI-Generated Content on Brand Identity Consistency in Social Media: A Systematic Literature Review. Journal of Mechatronics and Artificial Intelligence, 2(1), 31-44.

-

Lambert, A. (1989). Corporate identity and facilities management. Facilities, 7(12), 7-12.

[https://doi.org/10.1108/eb006515]

-

Lee, H. K. (2022). Rethinking creativity: Creative industries, AI and everyday creativity. Media, Culture & Society, 44(3), 601-612.

[https://doi.org/10.1177/01634437221077009]

-

Li, C., Gan, Z., Yang, Z., Yang, J., Li, L., Wang, L., & Gao, J. (2024). Multimodal foundation models: From specialists to general-purpose assistants. Foundations and Trends® in Computer Graphics and Vision, 16(1-2), 1-214.

[https://doi.org/10.1561/9781638283379]

-

Liu, V., & Chilton, L. B. (2022, April). Design guidelines for prompt engineering text-to-image generative models. In Proceedings of the 2022 CHI conference on human factors in computing systems (pp. 1-23).

[https://doi.org/10.1145/3491102.3501825]

-

Lu, J., Clark, C., Zellers, R., Lee, S., Zhang, Z., Khosla, S., Marten, R., Hoiem, D., & Kembhavi, A. (2023). Unified-IO 2: Scaling autoregressive multimodal models with vision, language, audio, and action. arXiv preprint arXiv:2312.17172.

[https://doi.org/10.1109/CVPR52733.2024.02497]

-

Melewar, T. C., & Saunders, J. (2000). Global corporate visual identity systems: using an extended marketing mix. European journal of marketing, 34(5/6), 538-550.

[https://doi.org/10.1108/03090560010321910]

- Nakanishi, M., & Son, H. (1997). New decomas: CI.BI를 통한 신경영전략 [New Decomas: New management strategy through CI.BI]. Design House. (p. 64).

-

Negueruela del Castillo, D., Schaerf, L., Ballesteros, P., Neri, I., Bernasconi, V. (2023). Newly Formed Cities: an AI Curation. arXiv.

[https://doi.org/10.48550/arXiv.2306.03753]

- Nielsen, J. (1994). Heuristic evaluation. In Usability inspection methods (pp. 25-62).

-

Oppenlaender, J. (2022, November). The creativity of text-to-image generation. In Proceedings of the 25th international academic mindtrek conference (pp. 192-202).

[https://doi.org/10.1145/3569219.3569352]

- Patel, K., Beeram, D., Ramamurthy, P., Garg, P., & Kumar, S. (2024). AI-enhanced design: Revolutionizing methodologies and workflows. International Journal of Artificial Intelligence Research and Development, 2(1), 135-157. https://iaeme.com/Home/article_id/IJAIRD_02_01_013.

-

Radford, A., Kim, J. W., Hallacy, C., Ramesh, A., Goh, G., Agarwal, S., ... & Sutskever, I. (2021, July). Learning transferable visual models from natural language supervision. In International conference on machine learning (pp. 8748-8763). PmLR.

[https://doi.org/10.48550/arXiv.2103.00020]

-

Rezwana, J., & Maher, M. L. (2023, June). User perspectives on ethical challenges in human-AI cocreativity: A design fiction study. In Proceedings of the 15th Conference on Creativity and Cognition (pp. 62-74).

[https://doi.org/10.1145/3591196.3593364]

-

Ryu, S. J. (2023). Designing a process for the usefulness of AI-powered brand design [Master's thesis, Yonsei University, Department of Visual Design].

[https://doi.org/10.31678/SDC100.8]

-

Shackel, B., & Richardson, S. J. (Eds.). (1991). Human factors for informatics usability. Cambridge University Press.

[https://doi.org/10.1017/CBO9780511626114]

- Shukor, M., Dancette, C., Rame, A., & Cord, M. (2023). UnIVAL: Unified model for image, video, audio and language tasks. arXiv preprint arXiv:2307.16184.

-

Stuart, H., & Muzellec, L. (2004). Corporate makeovers: Can a hyena be rebranded?. Journal of Brand Management, 11(6), 472-482.

[https://doi.org/10.1057/palgrave.bm.2540193]

- Sun, C., & Park, S. (2019). A study of an online logo design making platform [Master's thesis, Seoul National University of Science and Technology].

-

Tao, W., Gao, S., & Yuan, Y.-L. (2023). Boundary crossing: An experimental study of individual perceptions toward AIGC. Frontiers in Psychology, 14, 1185880.

[https://doi.org/10.3389/fpsyg.2023.1185880]

- Torrance, E. P. (1966). Torrance tests of creative thinking. Educational and psychological measurement.

-

Van den Bosch, A. L., De Jong, M. D., & Elving, W. J. (2005). How corporate visual identity supports reputation. Corporate Communications: An International Journal, 10(2), 108-116.

[https://doi.org/10.1108/13563280510596925]

-

Van Riel, C. B., & Van den Ban, A. (2001). The added value of corporate logosAn empirical study. European journal of marketing, 35(3/4), 428-440.

[https://doi.org/10.1108/03090560110382093]

-

Verganti, R., Vendraminelli, L., & Iansiti, M. (2020). Innovation and design in the age of artificial intelligence. Journal of product innovation management, 37(3), 212-227.

[https://doi.org/10.1111/jpim.12523]

-

Walsh, M. F., Page Winterich, K., & Mittal, V. (2010). Do logo redesigns help or hurt your brand? The role of brand commitment. Journal of Product & Brand Management, 19(2), 76-84.

[https://doi.org/10.1108/10610421011033421]

-

Westcott Alessandri, S. (2001). Modeling corporate identity: a concept explication and theoretical explanation. Corporate Communications: An International Journal, 6(4), 173-182.

[https://doi.org/10.1108/EUM0000000006146]

- Wheeler, A. (2012). Designing brand identity: An essential guide for the whole branding team (4th ed.). John Wiley & Sons.

-

Woo, J. J., & Lee, H. S. (2007). Cultural differences in brand designs and tagline appeals. International Marketing Review, 24(4), 474-491.

[https://doi.org/10.1108/02651330710761035]

-

Wu, Z.-H., Fan, M., Tang, R.-T., Ji, D.-W., & Mohammad, S. (2023). The art of artificial intelligent generated content for mobile photography. In M. Kurosu & A. Hashizume (Eds.), Human-computer interaction. HCII 2023. Lecture notes in computer science (Vol. 14014, pp. 438-453). Cham: Springer.

[https://doi.org/10.1007/978-3-031-35572-1_29]

- Wu, J., Gan, W., Chen, Z., Wan, S., & Lin, H. (2023). AI-generated content (AIGC): A survey. arXiv. 2304.06632.

-

Yin, S., Fu, C., Zhao, S., Li, K., Sun, X., Xu, T., & Chen, E. (2024). A survey on multimodal large language models. National Science Review, 11(12), nwae403.

[https://doi.org/10.1093/nsr/nwae403]

- Younjung, H., & Yi, W. (2024). A study on brand design methodology using generative AI. International Journal of Advanced Smart Convergence, 13(4), 50-59.

-

Zhang, Z., Li, C., Sun, W., Liu, X., Min, X., & Zhai, G. (2023, July). A perceptual quality assessment exploration for aigc images. In 2023 IEEE International Conference on Multimedia and Expo Workshops (ICMEW) (pp. 440-445). IEEE.

[https://doi.org/10.1109/ICMEW59549.2023.00082]