Music Visualization Using Dynamic Phyllotactic Patterns: A Generative Design Approach with Bézier Curve Mapping

Abstract

Background Conventional music visualization techniques like spectrograms and particle systems generally rely upon mechanistic algorithmic mappings that inadequately convey the structural coherence and emotional nuances of music. This study explores whether generative patterns drawing on natural forms can surmount these limitations.

Methods We developed a dynamic phyllotaxis-based visualization algorithm that combines Vogel’s spatial optimization model with cubic Bézier curve mappings. The system modulates the divergence angle, radial density, and curvature of the spiral patterns in real time according to musical features such as rhythmic intensity, spectral centroid, and amplitude envelope. Technical performance was evaluated against baseline models, and a user study (N = 50) compared the proposed method with spectrogram-based visualization.

Results The proposed algorithm achieved lower latency (16 ms vs. 82 ms) and greater morphological diversity than traditional Vogel models. Users rated it higher than the spectrogram control for emotional resonance (4.1/5 vs. 2.9/5), visual engagement (4.4/5 vs. 3.2/5), and music synchronization (4.3/5 vs. 3.5/5).

Conclusions This study takes a generative approach to music visualization drawing upon phyllotactic patterns to bridge musical structure and visual expression. The findings suggest that nature-inspired generative mechanisms constitute an alternative to conventional visualization methods for creating more engaging audiovisual experiences.

Keywords:

Phyllotactic Patterns, Music Visualization, Vogel’s Formula, Bézier Curves, Real-time Visualization

1. Introduction

As technology has advanced, music has ceased to be a purely auditory experience and is increasingly presented in visual form, because visualization has been recognized as an effective tool for uncovering and understanding the structural intricacies of musical compositions (De Prisco et al., 2017). The drive to create integrated audiovisual experiences is supported by the brain’s innate capacity for multisensory integration, that is, its ability to combine information from different senses, down to the level of single neurons, into a unified and often enhanced percept (Stein & Stanford, 2008). However, the efficacy of visualization depends heavily on its design. Traditional music visualization techniques (e.g., spectrograms and particle systems; Lima et al., 2022) reflect changes in the audio signal in real time but often fail to convey the order, coherence, and rhythmicity that structure the music. These methods rely on direct, mechanistic mappings from signal processing algorithms (Bale et al., 2021) that represent the data immediately without conveying higher-level musical structure or emotional content. By contrast, our work belongs to the paradigm of artistic data visualization, which goes beyond analytical utility toward aesthetic engagement, emotional resonance, and open-ended interpretation (Viegas & Wattenberg, 2007).

In this regard, natural systems have evolved to create patterns that are both highly ordered and dynamically adaptive. The spiral phyllotactic patterns of sunflowers, for instance, result from efficient, self-organizing processes that optimize spatial packing and resource distribution (Owens et al., 2016; Ridley, 1986). These patterns have properties (such as hierarchical organization, coherent growth, and rhythmic periodicity) analogous to the structural and temporal qualities of music. Such natural patterns, like the spiral arrangement of sunflower seeds, inspired the idea that generative mechanisms drawn from natural forms may help connect raw audio signals to meaningful visual expressions that resonate with musical form and feeling.

This study proposes a music visualization method inspired by the phyllotactic patterns observed in nature. It employs mathematical modeling and parametric design techniques to generate continuously transforming patterns that vividly express the rhythm and cadence of music. Specifically, we use a curve control technique that continuously modulates pattern transformations, providing a real-time mapping between patterns and music signals. In this way, the visual effects directly reflect the dynamic changes in the music and offer an aesthetically pleasing audiovisual experience that effectively renders the intrinsic musical structure. In essence, the method goes beyond simple data representation to create a meaningful audiovisual discourse, reflecting the key relationship identified in auditory display research between sound (in our case, sound-driven visuals) and the meaning it is intended to convey (Hermann & Ritter, 2004). By grounding our visualization in the self-organizing and aesthetically harmonious principles of phyllotaxis, we seek to create an intrinsic and intuitive semantic connection between music and its visual counterpart that fosters deeper cognitive and emotional resonance.

This paper first introduces the background of music visualization and the application of natural aesthetics in this domain. It then describes the mathematical modeling framework and parametric design methodology and presents the real-time visualization effects achieved in experiments. Finally, it discusses the advantages of the method and its future applications in interactive art and virtual environments. Through this approach, we aim to open new directions for the visual expression of music, allowing listeners to enjoy captivating visual dimensions alongside the auditory experience.

2. Literature Review

2. 1. Music Visualization and Nature Inspiration

The field of music visualization encompasses a wide repertoire of techniques for representing musical data visually, which recent literature has surveyed thoroughly (Khulusi et al., 2020). The technology has progressed from static spectral analysis (e.g., the Fourier transform) to real-time interactive systems (e.g., particle engines; Lima et al., 2022; Lokki et al., 2002), all intended to enhance the emotional expression of music and listeners’ cognitive involvement through visual media. Nevertheless, current methods such as waveform mapping and particle animation rely primarily on direct transformations by signal processing algorithms, and as a result, parameter-mapping rules confine their visual expression to the mechanistic (Bale et al., 2021). Examples include color-based visualizations (Ciuha et al., 2010), image-based visualizations (Lehtiniemi and Holm, 2012; Machida and Itoh, 2011; Reeves, 1983), shape-based visualizations (Bergstrom et al., 2007; Carter-Enyi et al., 2021; Miyazaki et al., 2003; Valbom and Marcos, 2007), particle-based visualizations (Fonteles et al., 2013), and fractal representations. Another important type uses mathematical models to create graphical imagery, combining graphics with mathematical statistics in music visualization (W. Li & Li, 2020). While these methods, including those based on mathematical graphics, demonstrate the technical feasibility of mapping music to form, they too often focus more on structural or statistical fidelity than on expressing dynamic, organic, and emotionally resonant qualities. Although 3D particle systems can respond to musical rhythm through emitter properties (e.g., color and volume), their random bursts do not easily form visual narratives with bio-organic qualities, which limits the user’s capacity to resonate deeply with the emotion of the music.
This limitation highlights a crucial problem in current research: the temporal dynamics and complexity of music demand visual generation mechanisms with greater self-organizing capacity.

In recent years, bioinspired design has offered a new pathway for resolving this issue. By simulating the dynamic adaptability of natural systems (e.g., phyllotactic spiral growth and fractal branching), researchers have attempted to combine the mathematical principles of biological forms (e.g., the golden angle and the Fibonacci sequence) with musical features to generate visual expressions that unite aesthetic harmony with emotional responsiveness (Lokki et al., 2002). However, a key distinction can be drawn within nature-inspired visualization. Most current achievements involve the offline rendering of static natural patterns (e.g., fractal art; Jiang et al., 2019), which mainly mimic the end form of natural structures for visual purposes. In contrast, dynamic natural mechanisms (e.g., the spatial competition models inherent in phyllotaxis; Prusinkiewicz et al., 2001), that is, the time-dependent processes and algorithmic rules governing the growth and formation of natural patterns, remain largely unexplored. In particular, the coupling of these dynamic mechanisms with real-time musical parameters (rhythm, timbre, and emotional intensity) has received little attention in the field. This gap matters because static patterns offer only a limited, predefined visual vocabulary, whereas dynamic mechanisms can serve as a generative “engine” that produces coherent, adaptive, and organically evolving visuals mirroring the temporal and structural dynamics of the music itself.
Organic and nature-inspired visual patterns have been used successfully in artistic performances and installations (such as the works of Universal Everything or Andres Wanner), demonstrating their aesthetic appeal; however, academic investigation into systematically coupling the dynamic generative mechanisms of nature (as opposed to static forms alone) with real-time music remains limited. This limitation constrains the vitality and expressive range of music visualization systems in interactive scenarios (e.g., live performances and virtual reality), where such systems could create visuals that are not only naturalistic but also inherently and organically responsive to musical structure in real time, much like a living system.

2. 2. Morphogenesis and Natural Pattern Generation

Morphogenesis, a central paradigm in interdisciplinary generative design, seeks to translate performance data (such as environmental constraints and functional requirements) into adaptive geometric forms by simulating the dynamic mechanisms of biological systems (e.g., plant growth; Lintermann & Deussen, 1999). The approach originates in botany: early studies of natural patterns such as leaf arrangements and root bifurcations revealed that biological morphogenesis emerges from the synergistic interplay between physical constraints and genetic expression (Gokmen, 2022). For instance, the spiral patterns of plant phyllotaxis have been shown to be optimal solutions to the dynamic competition among growth points for spatial resources, and their mathematical representations (e.g., Vogel’s formula and the Fibonacci sequence) provide a biomimetic foundation for computational generative algorithms (Fowler et al., 1992; Prusinkiewicz & Lindenmayer, 1990). Consequently, morphogenetic research has gradually shifted from biological explanation to design application: the self-organizing rules of natural systems (e.g., stress feedback and energy minimization) have been quantified and used to construct shape-search algorithms that dynamically adapt to input parameters, optimize layout efficiency, or enhance information visualization performance (Gokmen, 2022).

Phyllotactic patterns have become a fundamental research topic in graphics and generative design within bioinspired pattern generation because of their mathematical regularity, visual harmony, and scalability. The logarithmic spirals of pinecone scales and the Fibonacci layout of sunflower seeds are characterized by strict geometric constraints (e.g., the golden angle), yet the mechanism that creates them is inherently adaptive, arising from organisms’ responses to their environment (Fowler et al., 1992; Owens et al., 2016). Nevertheless, although static natural patterns can be reproduced computationally, integrating them with real-time external stimuli such as music still poses major problems.

2. 3. The Sunflower Phyllotaxis Model

The seeds in a sunflower capitulum are arranged in a high-density spiral phyllotaxis pattern that adheres to the principle of maximizing nonoverlapping packing efficiency (Owens et al., 2016). Early research employed static planar layouts based on Archimedean spirals (Mathai & Davis, 1974) and Vogel’s formula (Vogel, 1979) to computationally replicate this natural phenomenon using mathematical models. Among these, Vogel’s model has become the benchmark method in computer graphics due to its parameter flexibility and computational reliability.

Furthermore, Vogel’s model has been extended to curved surfaces through geometric and topological transformations, including cylinders (Fowler et al., 1989; Prusinkiewicz & Lindenmayer, 1990), spheres (Lintermann & Deussen, 1999; Swinbank & Purser, 2006; Zeng & Wang, 2009), and more general surfaces of revolution (Ijiri et al., 2005). These extensions map natural phyllotactic patterns into three-dimensional space.

For more precise simulations of plants’ dynamic growth characteristics, the following key models have been proposed:

First, the phyllotactic dynamics framework (Prusinkiewicz et al., 2001) constructs recursive self-similar structures on uniformly growing meristems, elucidating the dynamic competition mechanism underlying directional leaf growth.

Second, growth-control models (Frenkel et al., 2023; Yeatts, 2004) introduce feedback through hormone concentration gradients and mechanical stress and assess seed configurations by their Voronoi entropy; the resulting spiral patterns are conceptualized as both aesthetically and functionally optimal.

The distinctive mathematical nature of phyllotactic patterns has offered new solutions in various fields, such as data visualization and biomimetic engineering design. Neumann et al. (2006) applied the Vogel model to fractal algorithms to create tools for visualizing hierarchical structures; Piccini et al. (2011) created 3D radial trajectories with favorable interleaving properties for cardiac MRI; Lyu et al. (2021) designed a micro heat sink based on phyllotactic patterns, with experiments demonstrating an 18% improvement in heat conduction performance; and Sarradj (2015) applied weighted optimization to microphone array layouts. Nevertheless, the current literature is mostly limited to static applications.

This paper proposes a cubic Bézier weighting algorithm that nonlinearly maps musical rhythm to curvature, exploiting the higher-order continuity of Bézier curves to overcome the mechanistic constraints of conventional particle systems (Fonteles et al., 2013).

2. 4. Rationale for Phyllotaxis Selection

Phyllotaxis (specifically the sunflower spiral) was chosen for this study not merely to address a research gap but because it is one of the few natural forms whose characteristics correspond closely to the goals of expressive music visualization:

Unity of Mathematical Order and Aesthetic Appeal: Phyllotactic patterns are based on rigorous mathematical principles (such as Vogel’s formula and the golden angle), providing a generative architecture that produces visually harmonious and coherent structures. This intrinsic order resonates with the structural foundations of music (e.g., tempo and meter), while the natural aesthetic quality aligns with the goal of producing emotionally engaging visuals.

Inherent Dynamic and Adaptive Growth Mechanism: Importantly, phyllotaxis is not a static pattern but the product of dynamic growth through the spatial competition of primordia on the meristem. This biological mechanism is always responsive and adaptive, and is directly translated in our model into the ability to map dynamic musical features (e.g., rhythmic intensity and emotional arousal) to parameters controlling the “growth” of the pattern (e.g., divergence angle and radial scaling), whereby the visualization grows organically in connection with the music rather than being built from static fractals or prerendered patterns.

Structural Hierarchy and Self-Similarity: Phyllotactic patterns display self-similarity across scales, such that a global spiral structure is made up of smaller similar spirals. This hierarchical nature offers a rich visual metaphor for musical structure, in which a piece is made up of movements, sections, phrases, and ultimately single notes. Our visualization can thus represent both the macro level (musical form) and the micro level (rhythmic detail) within a unified visual framework.

3. Method

3. 1. System Architecture

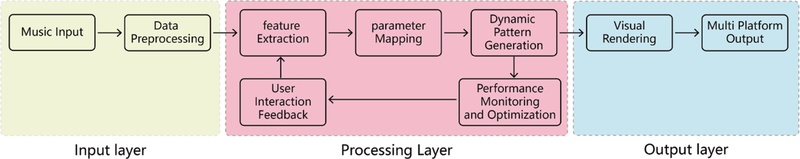

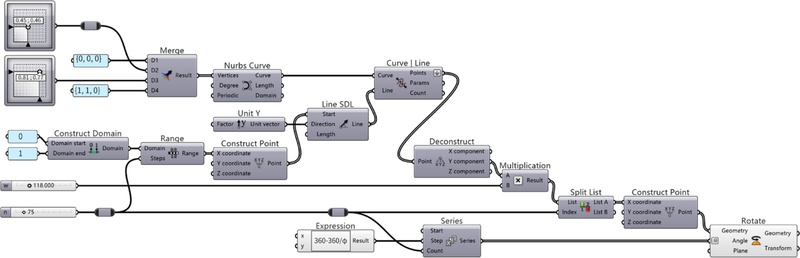

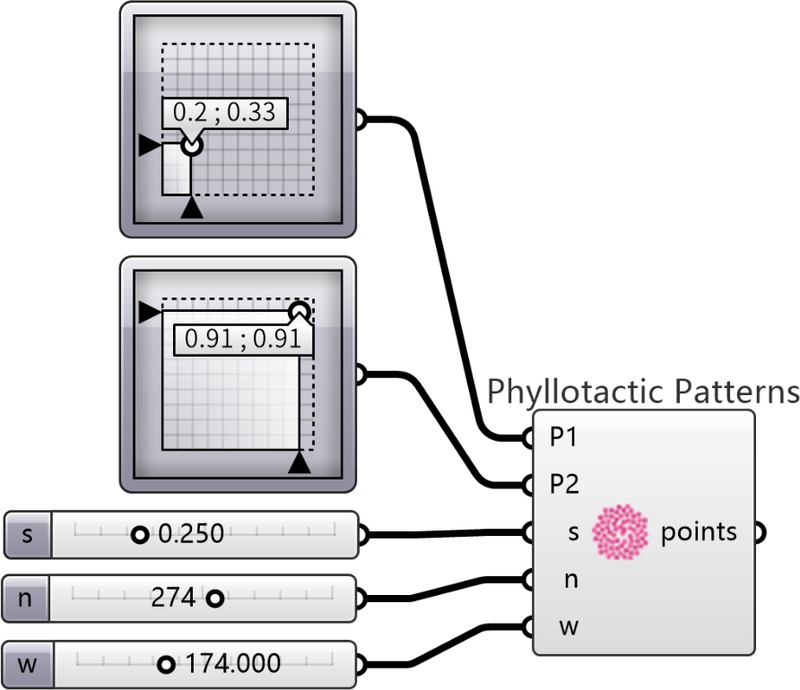

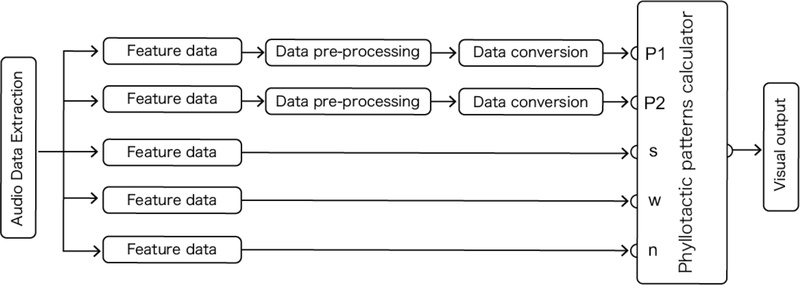

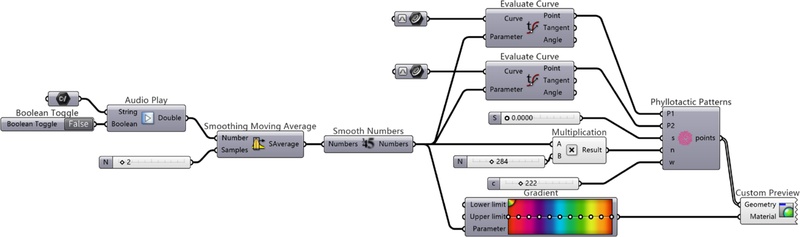

To implement the music-driven dynamic phyllotaxis generation algorithm, this study used Rhino and Grasshopper as the core development environments. Grasshopper’s node-based programming interface supports real-time parameter adjustment and dataflow visualization, facilitating the dynamic binding of mathematical modules (such as Vogel’s model and Bézier functions) to music input signals. Rhino’s NURBS geometry kernel efficiently handles the real-time rendering of complex spiral structures, meeting two requirements of music visualization: frame rate (≥30 fps) and graphical precision (submillimeter error). The openness of the Grasshopper component library allows the direct integration of third-party plugins (e.g., Butterfly for fluid dynamics simulation and TT Toolbox for data mapping), simplifying the cross-domain transformation from musical features to morphological parameters. The widespread use of Rhino/Grasshopper in parametric architecture and generative art (Dean & Loy, 2020; Saadi & Yang, 2023) means that this research rests on a mature foundation of user testing and community support. The platform also supports the development of an intelligent interactive system, because it enables real-time parameter adjustment and visual feedback, a core requirement for interactive music information systems (Liao, 2022). Figure 1 shows the architecture of the dynamic phyllotaxis visualization system based on Rhino/Grasshopper.

3. 2. Dynamic Phyllotaxis Modeling

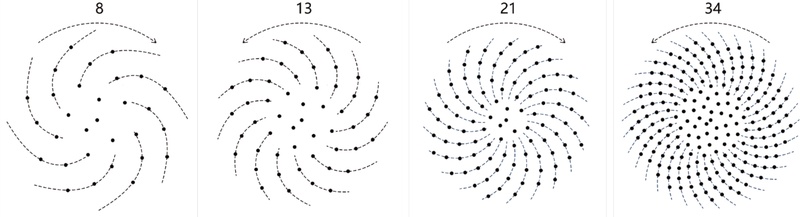

The sunflower exhibits Fibonacci spiral phyllotaxis, as explained in Section 2.3 (Figures 2 and 3). Our visualization model is built on this pattern, which is governed by the golden angle.

Adaptation to Dynamic Visualization

Vogel’s classical model, defined by

$$\theta_n = n\,\delta \tag{1}$$

$$r_n = c\sqrt{n} \tag{2}$$

(where n is the index of the point, c is a constant scaling factor, and δ is the divergence angle, typically 137.5°), yields an equilibrium arrangement with optimal packing. To adapt this model for real-time music visualization, we analyzed its parameters to determine which could be controlled dynamically to reflect the musical input without compromising the aesthetic and structural integrity of the phyllotactic pattern.
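To make the static starting point concrete, here is a minimal Python sketch of the two Vogel formulas, θ_n = nδ and r_n = c√n, converted to Cartesian coordinates. The function name and default values are our own illustration, not part of the Grasshopper definition described in this paper.

```python
import math


def vogel_points(count, c=1.0, delta_deg=137.5):
    """Return `count` (x, y) points of Vogel's static model.

    theta_n = n * delta and r_n = c * sqrt(n); the square root keeps the
    point density roughly uniform across the disc.
    """
    delta = math.radians(delta_deg)
    points = []
    for n in range(count):
        theta = n * delta          # angular position advances by delta per point
        r = c * math.sqrt(n)       # radius grows with the square root of the index
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

For example, `vogel_points(500, c=2.0)` returns 500 points whose outermost radius is 2√499 ≈ 44.7. Moving `delta_deg` away from 137.5° tends to produce visible radial arms, which is why δ is such a sensitive control parameter.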

Our adaptation reinterpreted the core parameters as functions of musical data:

Dynamic Divergence Angle (δ): In the static model, δ is constant. We treated it as a time-varying parameter δ(t) modulated by rhythmic intensity and by the spectral centroid (timbral brightness). This allows the tightness and overall curvature of the spiral to “breathe” in response to the energy and brightness of the music. For example, an increase in rhythmic intensity can increase δ(t), either linearly or nonlinearly depending on how the Bézier mapping is shaped, causing the spiral to expand more rapidly.

Dynamic Radial Scaling (c): The scaling factor c in the radial term regulates point density. We modulated it by the amplitude envelope and pitch height. Louder or higher-pitched passages can be mapped to larger values of c, producing sparser, more open patterns that visually react to the energy or frequency content of the music.

Temporal Sequencing: The index n serves not merely as a point counter but as a time sequence in the generation process. As new musical data arrive in real time, new points are added at coordinates (r(n,t), θ(n,t)), with c and δ evaluated at the current time t, so that the pattern evolves over time.

This analysis and adaptation transform the Vogel model from a formula for generating fixed images into a dynamic system Phyllotaxis(r(t), θ(t)) whose visual output is a direct function of the incoming musical stream.
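A minimal Python sketch of this time-varying reading of the Vogel model, assuming features normalized to [0, 1] arrive once per frame. The linear forms chosen for δ(t) and c(t), their gains, and the class name are hypothetical placeholders for the Bézier-shaped mappings described in Section 3.3.

```python
import math
from dataclasses import dataclass, field


@dataclass
class DynamicPhyllotaxis:
    """Sketch of a time-varying Vogel model; all mapping gains are hypothetical."""
    base_delta_deg: float = 137.5   # golden-angle baseline for delta(t)
    delta_range_deg: float = 2.0    # hypothetical maximum deviation of delta(t)
    base_c: float = 1.0             # baseline radial scaling c(t)
    c_gain: float = 1.0             # hypothetical gain from amplitude to c(t)
    points: list = field(default_factory=list)

    def delta_at(self, rhythm, centroid):
        # Hypothetical: rhythm and spectral centroid (both in [0, 1]) shift delta
        # symmetrically around 137.5 degrees.
        drive = 0.5 * (rhythm + centroid)
        return self.base_delta_deg + self.delta_range_deg * (2.0 * drive - 1.0)

    def c_at(self, amplitude):
        # Hypothetical: louder input -> larger c -> sparser, more open pattern.
        return self.base_c * (1.0 + self.c_gain * amplitude)

    def emit(self, rhythm, centroid, amplitude):
        """Add point n with delta and c evaluated at the current frame t."""
        n = len(self.points)                       # n doubles as the time-ordered index
        theta = n * math.radians(self.delta_at(rhythm, centroid))
        r = self.c_at(amplitude) * math.sqrt(n)
        self.points.append((r * math.cos(theta), r * math.sin(theta)))
        return self.points[-1]
```

Because δ and c are read at the moment each point is emitted, older points keep the parameters that were current when they were created, so the pattern accumulates a record of the music as it grows.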

3. 3. Bézier Curve Mapping

The decision to use cubic Bézier curves was not arbitrary; it rested on the recognition that music-based visualization demands a mapping mechanism that is both expressive and computationally efficient. Traditional linear mappings often fail to capture the subtle, nonlinear relationships between musical features and their visual equivalents. Bézier curves address this through the following properties, each of which meets a key requirement of our system:

Parametric Control and Expressive Nonlinear Mapping: The shape of a Bézier curve is determined by the locations of its control points P1 and P2. This provides a flexible method for developing nonlinear mappings of input (musical feature value) and output (morphological parameter). A linear change in the coordinate of a control point can result in a complicated curvilinear change to the resulting pattern. This allows the production of visually interesting and nonmechanical transformations that transcend a simple one-to-one correspondence.

Higher-Order Continuity (C2) and Temporal Smoothing: Music is a temporal art form, and sudden jumps in the visual domain can feel jarring during a song. A cubic Bézier curve is C2-continuous, meaning that its first and second derivatives (the speed and acceleration of change) are continuous everywhere along the curve. This helps keep visual transitions smooth even when the input musical data change rapidly (e.g., during a drum break or a crescendo) and avoids the visual tearing associated with piecewise-linear or step-function mappings.
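For reference, the standard Bernstein form of a cubic Bézier curve with control points P0 to P3, together with its first two derivatives, is given below. Both derivatives are polynomials in t and are therefore continuous on [0, 1]; a single cubic segment is in fact infinitely differentiable. Smoothness over time additionally depends on how quickly the control points themselves move, which is what the smoothing stage in Section 3.4 addresses.

$$\mathbf{B}(t) = (1-t)^3\mathbf{P}_0 + 3(1-t)^2 t\,\mathbf{P}_1 + 3(1-t)t^2\,\mathbf{P}_2 + t^3\mathbf{P}_3, \qquad t \in [0,1]$$

$$\mathbf{B}'(t) = 3\big[(1-t)^2(\mathbf{P}_1-\mathbf{P}_0) + 2(1-t)t\,(\mathbf{P}_2-\mathbf{P}_1) + t^2(\mathbf{P}_3-\mathbf{P}_2)\big]$$

$$\mathbf{B}''(t) = 6\big[(1-t)(\mathbf{P}_2-2\mathbf{P}_1+\mathbf{P}_0) + t\,(\mathbf{P}_3-2\mathbf{P}_2+\mathbf{P}_1)\big]$$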

Multidimensional Parameter Coupling: P1 and P2 are situated in 2D (or 3D) space, and each of their x- and y-coordinates is an independent degree of freedom. This allows several musical features to be coupled synergistically into a single coherent morphological change. For example, rhythm could be assigned to the x-coordinate of P1 and timbre to its y-coordinate, so that a single control point produces a multilayered, intricate visual response.

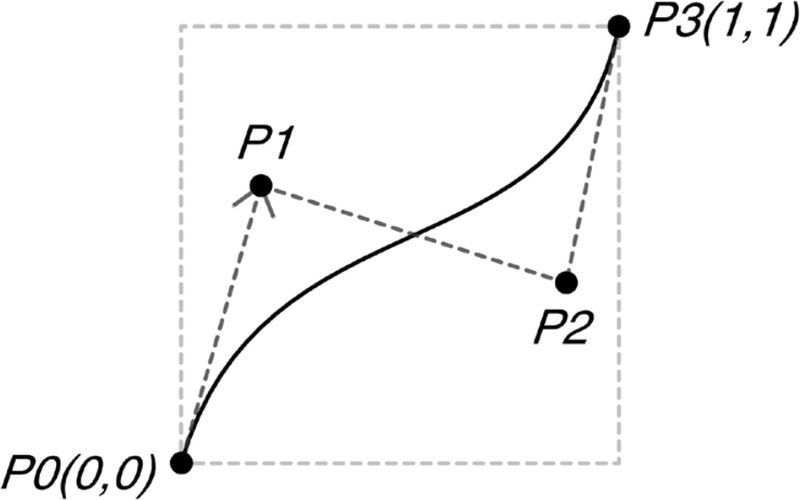

A cubic Bézier curve is defined by four control points (P0, P1, P2, P3) in 2D or 3D space (Farin, 2002; Khanteimouri et al., 2022; Piegl, 1991). As shown in Figure 4, these points determine the data range: P0 and P3 are fixed at (0,0) and (1,1), respectively, normalizing the input and output ranges to [0, 1]. The coordinates of P1 and P2 serve as the controllable, variable parameters (Böhm et al., 1984; Farin, 2002).
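Using the curve as a mapping from a musical feature x to a morphological parameter y requires y as a function of x, whereas the curve itself is parameterized by t. The Python sketch below assumes the curve is read as a transfer function y = f(x), the same reading used by CSS `cubic-bezier()` easing; the function names are hypothetical.

```python
def cubic_bezier(t, p0, p1, p2, p3):
    """Evaluate one coordinate of a cubic Bezier curve in Bernstein form at t."""
    u = 1.0 - t
    return u**3 * p0 + 3 * u**2 * t * p1 + 3 * u * t**2 * p2 + t**3 * p3


def bezier_map(x, p1, p2, iterations=50):
    """Map x in [0, 1] to y through the curve (0,0), P1, P2, (1,1).

    Assumes p1[0] and p2[0] lie in [0, 1], so that x(t) is nondecreasing
    and bisection on t is valid.
    """
    x = min(max(x, 0.0), 1.0)
    lo, hi = 0.0, 1.0
    for _ in range(iterations):                # bisection: find t with x(t) = x
        mid = 0.5 * (lo + hi)
        if cubic_bezier(mid, 0.0, p1[0], p2[0], 1.0) < x:
            lo = mid
        else:
            hi = mid
    t = 0.5 * (lo + hi)
    return cubic_bezier(t, 0.0, p1[1], p2[1], 1.0)
```

With P1 = (1/3, 1/3) and P2 = (2/3, 2/3) the curve reduces to the identity; pulling the control points toward the lower right (e.g., P1 = (0.42, 0), P2 = (1, 1)) produces an ease-in response that compresses low feature values and steepens the response near the top of the range.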

Mapping Musical Features to Control Points

The process of a concrete music-visual mapping of an abstract curve is conducted by attributing definite musical semantics to the coordinates of the control points P1 and P2. The rationale behind this design is as follows:

Control Point P1 as the “Rhythm and Energy” Modulator: The position of P1, especially its x-coordinate, largely governs the overall curvature and tension of the phyllotactic spiral. It is therefore mapped to features associated with rhythmic intensity and amplitude (loudness). This mapping is intuitively justified by the cross-modal correspondence between greater intensity or energy and larger or broader visual dimensions (Spence, 2011).

Control Point P2 as the “Pitch and Texture” Modulator: The y-coordinate of P2 controls the local density and texture at the edges of the pattern. Its value is determined by pitch height and by the spectral centroid (brightness) of the timbre. This scheme is based on well-established cross-modal correspondences, including the pitch-height effect, whereby higher-pitched sounds are consistently associated with higher spatial positions, and the relationship between auditory brightness and visual lightness or elevation (Spence, 2011). This mapping turns the abstract coordinates of the Bézier curve into channels for musical expression, connecting sonic qualities directly to visual morphology.
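As a concrete but hypothetical illustration of this assignment, the Python sketch below combines normalized features (each assumed to lie in [0, 1]) into P1 and P2. Averaging the paired features and holding the unused coordinates (P1’s y and P2’s x) at fixed defaults are our assumptions; all coordinates are clamped to the unit square.

```python
def _clamp01(v):
    """Keep a coordinate inside the unit square."""
    return min(max(v, 0.0), 1.0)


def features_to_control_points(rhythm, loudness, pitch, brightness,
                               p1_y=0.2, p2_x=0.8):
    """Hypothetical mapping of normalized features to control points P1 and P2.

    P1.x carries "rhythm and energy"; P2.y carries "pitch and texture".
    The averaging and the default values for p1_y and p2_x are illustrative.
    """
    energy = 0.5 * (rhythm + loudness)      # rhythmic intensity + amplitude
    tone = 0.5 * (pitch + brightness)       # pitch height + spectral centroid
    p1 = (_clamp01(energy), _clamp01(p1_y))
    p2 = (_clamp01(p2_x), _clamp01(tone))
    return p1, p2
```

Clamping here keeps every output inside the square domain, which, as discussed below, is the region in which the patterns remain stable.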

Figure 5 shows the mapping process of curves using the points (0,0), P1, P2, and (1,1) to create the Bézier curve. P1 and P2 are controlled through a multidimensional slider input to produce the desired curves.

In addition, this curve control algorithm was packaged as a reusable component with five inputs: the control points P1 and P2, the array start value s, the pattern width w, and the point count n. Figure 6 shows how these elements relate. When these values change dynamically with real-time input data, the visual patterns evolve naturally, creating morphological flows in step with the music.
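Because the wiring in Figure 6 is not spelled out in the text, the Python sketch below is only one hypothetical reconstruction that is consistent with the behavior described in this section (a central gap of radius s when s > 0). Point i is placed at i times the golden angle, and its radius is s + w·√f(i/(n−1)), where f is the curve through (0,0), P1, P2, and (1,1) read as y = f(x). The square root is our choice, made so that the linear control points reproduce Vogel’s r ∝ √n.

```python
import math

GOLDEN_ANGLE = math.radians(137.5)  # fixed divergence angle in this sketch


def _cubic(t, a, b, c, d):
    """One coordinate of a cubic Bezier curve in Bernstein form."""
    u = 1.0 - t
    return u**3 * a + 3 * u**2 * t * b + 3 * u * t**2 * c + t**3 * d


def _curve_y_at_x(x, p1, p2, iterations=40):
    """Read the curve (0,0), P1, P2, (1,1) as y = f(x); assumes P1, P2 in the unit square."""
    lo, hi = 0.0, 1.0
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if _cubic(mid, 0.0, p1[0], p2[0], 1.0) < x:
            lo = mid
        else:
            hi = mid
    return _cubic(0.5 * (lo + hi), 0.0, p1[1], p2[1], 1.0)


def bezier_phyllotaxis(p1, p2, s, w, n):
    """Hypothetical five-input generator (P1, P2, s, w, n) returning n (x, y) points.

    With P1 = (1/3, 1/3), P2 = (2/3, 2/3) and s = 0 this reduces to Vogel's
    r = c * sqrt(i) with c = w / sqrt(n - 1); s > 0 opens a central gap of radius s.
    """
    points = []
    for i in range(n):
        x = i / (n - 1) if n > 1 else 0.0
        f = min(max(_curve_y_at_x(x, p1, p2), 0.0), 1.0)  # guard against rounding
        radius = s + w * math.sqrt(f)
        angle = i * GOLDEN_ANGLE
        points.append((radius * math.cos(angle), radius * math.sin(angle)))
    return points
```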

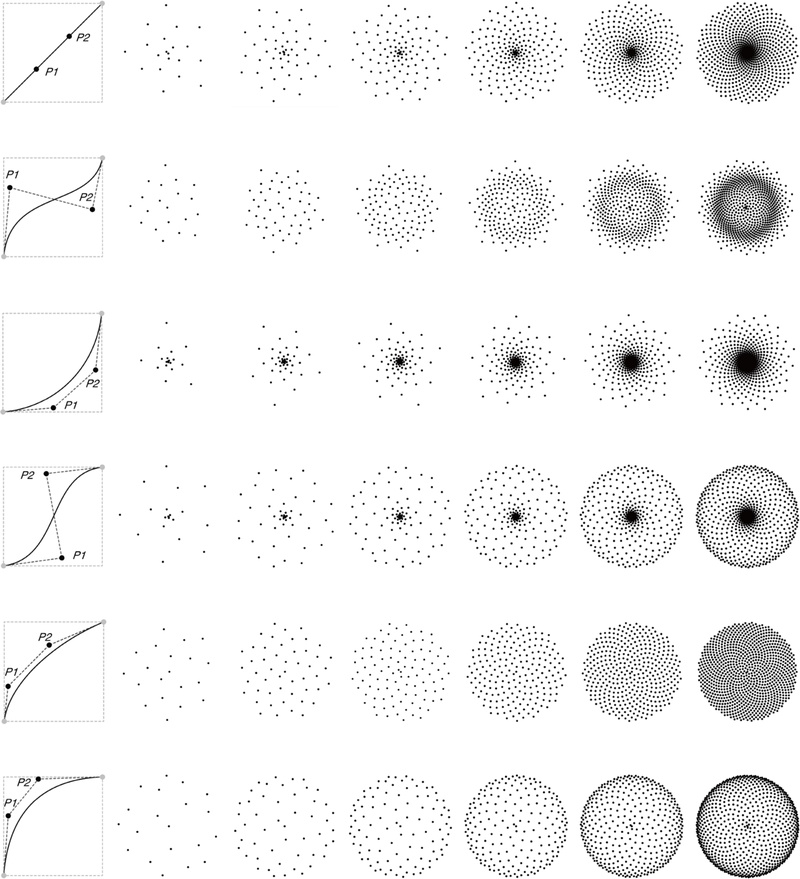

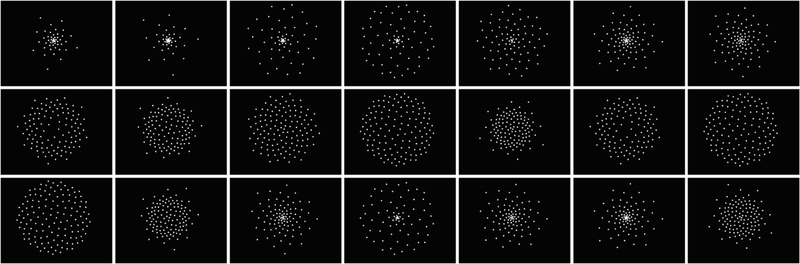

The Bézier-controlled dynamic phyllotaxis model responds sensitively to its input parameters, enabling the kind of pattern sculpting suited to music visualization. This section presents a range of visual patterns generated by various combinations of point count n, initial spacing s, and control point positions P1 and P2.

Figure 7 displays spiral patterns generated by varying n and the coordinates of P1, P2. Both control points reside within a square domain diagonally bounded by (0,0) and (1,1), where their positions critically influence the point distribution density and curvature morphology.

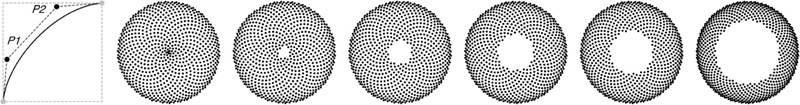

Figure 8 demonstrates the effect of varying the initial array spacing s. When s > 0, a central gap appears in the pattern and widens in proportion to s. This adjustment adds variation to the patterns and is effective for representing beat intervals or rests (pauses) in music.

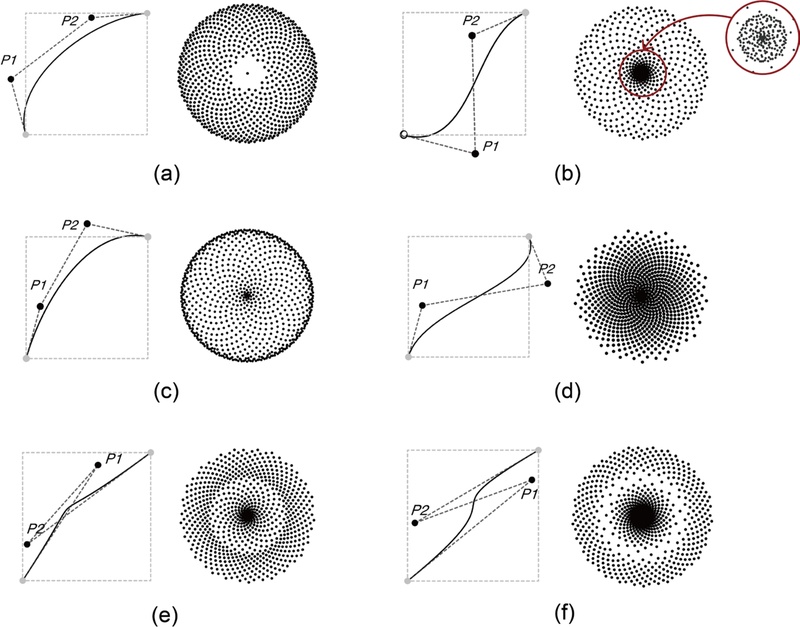

However, when the control points fall outside the boundaries of the square, unintended defects in visual patterns may occur. Figure 9 provides a visualization of such errors:

(a) If P1 deviates leftward, the central structure vanishes (resembling increased s-value effects).

(b) If P1 falls below the lower boundary, points randomly overlap.

(c) If P2 falls above the upper boundary, the entire pattern abnormally enlarges.

(d) If P2 deviates rightward, the pattern becomes excessively compressed.

(e),(f) If the control lines for P1 and P2 intersect or show a kink, the curvature continuity becomes defective.

These results indicate that stable patterns can only be generated when the control points are constrained to fall within the square domain, thereby ensuring predictable visual representations in real-time music visualization.
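The square constraint has a simple analytic reading. With P0 = (0,0) and P3 = (1,1), if the x-coordinates of P1 and P2 both lie in [0, 1], the curve’s x(t) never decreases, so the curve is a single-valued function of x; and if their y-coordinates lie in [0, 1], the convex-hull property keeps y(t) within [0, 1]. Moving a control point outside the square can make the curve fold back or overshoot, which is one plausible source of defects such as those in Figure 9. The Python helpers below (hypothetical names) check the x-monotonicity numerically and clamp control points back into the square.

```python
def in_unit_square(p):
    """True if a control point lies inside the square bounded by (0,0) and (1,1)."""
    return 0.0 <= p[0] <= 1.0 and 0.0 <= p[1] <= 1.0


def constrain_to_square(p):
    """Clamp a control point back into the unit square."""
    return (min(max(p[0], 0.0), 1.0), min(max(p[1], 0.0), 1.0))


def x_is_monotonic(p1, p2, samples=200):
    """Sample x(t) of the curve (0,0), P1, P2, (1,1) and check that it never decreases."""
    def x_at(t):
        u = 1.0 - t
        # The P0 term vanishes (x0 = 0); the P3 term reduces to t**3 (x3 = 1).
        return 3 * u**2 * t * p1[0] + 3 * u * t**2 * p2[0] + t**3

    xs = [x_at(i / samples) for i in range(samples + 1)]
    return all(b >= a for a, b in zip(xs, xs[1:]))
```

For example, P1 = (−0.5, 0.2) (P1 shifted left, as in Figure 9a) fails the check, while its clamped version (0.0, 0.2) passes.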

3. 4. Music Feature Processing

The accuracy, computational speed, and complexity of the feature extraction algorithm are important factors in the quality of music visualization. Audio features are generally divided into low-level descriptors, including amplitude, frequency, and phase, and high-level attributes, including pitch, emotion, timbre, and rhythmic structure (Lima et al., 2022); however, some higher-level features are unstable to estimate or computationally intensive. A persistent challenge is translating these features, especially subtle aspects such as timbre, into visual form, which remains an active research topic (Cantareira et al., 2016). Recent strategies go beyond low-level descriptors by adding semantic descriptors for the perceptual aspects of timbre (Soraghan et al., 2018). The current study follows this trend in attempting to define an intuitive mapping between timbre and the organic morphology of phyllotactic patterns.

Despite its broad use in visualization, the command-based structure of the MIDI format limits the quantitative extraction of loudness and cannot capture the emotional nuances and dynamic variations in sound pressure found in natural audio sources (Khulusi et al., 2020). We therefore used natural audio in this study for greater realism and scalability. Natural audio also covers a wide range of sources, such as speech and environmental sounds, which makes the system suitable for performances, interactive installations, and context-driven visualization environments.

The system uses the Audio Play component of the Mosquito plugin for Grasshopper to extract real-time waveform data. This component outputs amplitude peak values as a time sequence of double-precision floating-point numbers. Because this format is incompatible with the inputs of conventional visualization models, data type conversion and normalization are essential.
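A minimal Python sketch of the conversion and normalization step, assuming the peaks arrive one at a time as (possibly signed) numbers and that a slowly decaying running maximum is an acceptable normalization reference. The class name and decay value are hypothetical and are not part of Mosquito or Grasshopper.

```python
class PeakNormalizer:
    """Map incoming amplitude peaks to [0, 1] for the visualization inputs.

    Hypothetical: keeps a slowly decaying running maximum so that quiet and
    loud tracks both use the full range without knowing the track in advance.
    """

    def __init__(self, decay=0.999, floor=1e-9):
        self.decay = decay          # per-sample decay of the running maximum
        self.floor = floor          # avoids division by zero during silence
        self.running_max = floor

    def __call__(self, peak):
        magnitude = abs(float(peak))    # type conversion; use magnitude of signed peaks
        self.running_max = max(magnitude, self.running_max * self.decay, self.floor)
        return min(magnitude / self.running_max, 1.0)
```

The decay lets the reference level fall back after a loud passage, so later quiet passages are not permanently compressed toward zero.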

Figure 10 summarizes the data processing workflow required for the Bézier-based Fibonacci visualization model.

In this workflow, each port is handled as follows:

P1, P2 Ports: The two-dimensional coordinates of each audio waveform sample are converted to control-point coordinates within the square domain.

s Port: Because Audio Play accepts only single-track input, the center spacing is left at its default value of 0.

n Port: The input amplitude, which rises and falls over time, is mapped through a curve to control the number of points in real time.

w Port: This is set to a fixed value that matches the intended visual range.

Smoothing and Calibration: Abrupt changes in the data over very short periods are damped to maintain a gradual, continuous evolution of the visual patterns.
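The port assignments above can be sketched as a single function. The text does not specify which two values form the “two-dimensional coordinates” of a waveform sample, so this Python sketch assumes two consecutive normalized amplitudes; the smoothstep curve used for n, the point-count range, and the fixed width are likewise illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class PortValues:
    """Inputs of the five-port curve-control component (P1, P2, s, w, n)."""
    p1: tuple
    p2: tuple
    s: float
    w: float
    n: int


def _clamp01(v):
    return min(max(float(v), 0.0), 1.0)


def assign_ports(a_prev, a_curr, n_min=200, n_max=1200, width=10.0):
    """Hypothetical port assignment from two consecutive normalized amplitudes."""
    a_prev, a_curr = _clamp01(a_prev), _clamp01(a_curr)
    p1 = (a_prev, a_curr)                  # assumed reading of a sample's 2D coordinates
    p2 = (1.0 - a_curr, 1.0 - a_prev)      # mirrored so both points stay in the square
    eased = a_curr * a_curr * (3.0 - 2.0 * a_curr)   # smoothstep as the "curved" n mapping
    n = round(n_min + (n_max - n_min) * eased)       # louder input -> more points
    return PortValues(p1=p1, p2=p2, s=0.0, w=width, n=n)  # s = 0 (single track), w fixed
```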

Audio information extracted for music visualization tends to be discrete and abrupt. Mapping it directly onto visual patterns may therefore produce discontinuous patterns or visual glitches, a common problem in real-time systems that can ruin the user experience (Li, 2022). To address this, we employed a multistage data-smoothing algorithm that preserves rhythmic perception while producing smooth, continuous visual transitions. Although smoothing is a standard preprocessing step, its parameters must be adapted to real-time interactive visualization: noise should be reduced to a minimum without introducing observable latency that would break the alignment between visual and musical rhythm.

Three typical smoothing strategies were compared against two major performance criteria to determine the best approach:

Criterion 1 (Visual Stability): The ability to suppress high-frequency jitter and create smooth, natural transitions between patterns.

Criterion 2 (Temporal Responsiveness): Minimal processing delay, so that visual transitions remain tightly coupled to the beats.

The following strategies were considered:

Moving average smoothing: Rapid in terms of stability but with a delay proportional to the size of the window. Any window larger than five samples introduced a noticeable latency between the visualization and the music.

Time delay smoothing: Adds an average latency. The perception of real-time synchronization was disrupted even in the case of short delays longer than 0.3 seconds.

Combined Moving-Average + Time-Delay Strategy : The perception of real-time synchronization was disrupted even in the case of short delays longer than 0.3 seconds.
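The latency cost of moving-average smoothing follows from a standard property. A causal N-sample moving average has a group delay of (N − 1)/2 samples. The sketch below converts that delay into milliseconds. The 60 Hz data update rate is an assumption, because the actual Audio Play output rate is not stated here, so the figures are illustrative only.

```python
def moving_average_delay_ms(window, update_rate_hz=60.0):
    """Group delay of a causal N-sample moving average, in milliseconds.

    A length-N boxcar filter delays its input by (N - 1) / 2 samples;
    dividing by the update rate converts samples to seconds.
    """
    if window < 1:
        raise ValueError("window must be at least 1 sample")
    return (window - 1) / 2 / update_rate_hz * 1000.0
```

Under the assumed 60 Hz rate, a 2-sample window adds about 8 ms and a 5-sample window about 33 ms. Each additional sample adds roughly another 8 ms.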

Justification for the Selected Hybrid Strategy

Based on iterative testing and observation of the visual output, the hybrid strategy was selected for the final system because it balanced our two criteria better than either alternative:

First, it provided adequate visual stability by effectively damping the most disruptive high-frequency jitter, thereby meeting Criterion 1.

Second, its small parameter values kept the overall latency below a perceptually critical threshold for most musical tempos, preserving the essential impression of real-time response and satisfying Criterion 2.

In contrast, using either method alone with stronger settings (a larger window or a longer delay) to achieve similar stability resulted in unacceptable rhythmic latency. The hybrid strategy was therefore implemented with specific parameters (2 samples, 0.5 s), as it provided the best compromise for a cohesive and responsive audiovisual experience. This configuration was used consistently in all subsequent experiments and user evaluations.
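A minimal sketch of the hybrid strategy follows. It assumes that the 2-sample figure is the moving-average window. It interprets 0.5 s as the time constant of a first-order lag (exponential) filter, which stands in for "time-delay smoothing". The text does not specify how the Grasshopper time-delay component works, so this interpretation is an assumption. The class `HybridSmoother` and its `update` method are hypothetical names.

```python
import math
from collections import deque


class HybridSmoother:
    """Hypothetical hybrid smoother: a short moving average followed by a
    first-order lag filter (assumed interpretation of 'time-delay smoothing')."""

    def __init__(self, window=2, tau=0.5):
        if window < 1 or tau <= 0:
            raise ValueError("window must be >= 1 and tau must be > 0")
        self.buffer = deque(maxlen=window)  # last `window` raw samples
        self.tau = tau                      # lag time constant in seconds
        self.state = None                   # current smoothed output

    def update(self, x, dt):
        """Feed one raw sample x, received dt seconds after the previous one."""
        self.buffer.append(x)
        avg = sum(self.buffer) / len(self.buffer)  # stage 1: moving average
        if self.state is None:
            self.state = avg  # initialize on the first sample to avoid a ramp from 0
        else:
            # stage 2: exponential approach toward avg; alpha depends on dt,
            # so the response time is the same at any frame rate
            alpha = 1.0 - math.exp(-dt / self.tau)
            self.state += alpha * (avg - self.state)
        return self.state
```

Computing `alpha` from the elapsed time `dt` keeps the smoothing behavior consistent when Grasshopper's update interval fluctuates. A fixed per-frame coefficient would respond faster at high frame rates and slower at low ones.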

These smoothing algorithms were implemented as custom Grasshopper components that integrate into the data-flow and visualization pipeline. The final data-processing component is shown in Figure 11. Using this component, we generated numerous random curves and experimented visually with patterns that change in real time with the musical rhythm. Although each curve produced a different pattern, all remained closely synchronized with the musical beats, strengthening the emotional impact of the visual experience.

Figure 12 presents an example of music visualization implemented with our system. By mapping real-time musical data to parameters such as the number of points (n), the curvature control points (P1, P2), and color variations, we observed that the visual patterns transform organically with the rhythm and emotion of the music (see the accompanying video file for a demonstration).

4. Evaluation

We adopted a dual-assessment protocol to evaluate the proposed phyllotaxis-based visualization algorithm in terms of both technical performance and user experience.

4. 1. Technical Performance

All models were implemented in the same Rhino/Grasshopper environment and run on the same hardware (Intel i7-11800H / RTX 3060 / 32 GB RAM) to ensure a fair and repeatable comparison. The models were configured as follows:

The proposed model (Bézier-weighted dynamic phyllotaxis) was implemented as described in Section 3.

Baseline 1 (Traditional Vogel Model) uses Vogel’s static formula. We configured it to produce the same total number of points (n) per frame as our dynamic model when driven by the same audio signal. This allows performance to be compared at an equal number of rendered elements over time and isolates the computational cost of the layout algorithm itself.

Baseline 2 (Particle System) is a standard CPU-based particle system following Fonteles et al. (2013). Its particles are emitted according to amplitude and move under simple physics, representing a common alternative approach to music visualization.
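For reference, Baseline 1’s layout follows Vogel’s (1979) formula. The k-th point is placed at angle k × 137.508° (the golden angle) and at radius c√k. The sketch below generates such a static spiral for a given point count n. It shows only the layout step, not the authors’ Grasshopper implementation, and the scale factor c is a free parameter.

```python
import math

# Golden angle pi * (3 - sqrt(5)) radians, approximately 137.508 degrees
GOLDEN_ANGLE = math.pi * (3.0 - math.sqrt(5.0))


def vogel_points(n, c=1.0):
    """Static Vogel spiral: point k lies at angle k * golden angle, radius c * sqrt(k)."""
    points = []
    for k in range(n):
        theta = k * GOLDEN_ANGLE
        r = c * math.sqrt(k)
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

Because the radius grows with √k, each point occupies roughly equal area. This uniform packing is what makes the static baseline a natural reference for the dynamic model.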

The following metrics were selected because they are directly relevant to real-time interactive applications:

Latency: Critical in live performance, because high latency causes the visuals to lag behind and fall out of sync with the music, breaking immersion.

Curvature Range: A wider range allows the visualization to depict a broader variety of musical “textures” and “dynamics” (e.g., smooth, gentle curves versus aggressive, twisting lines), and therefore increases its expressive capacity.

Branch Rate: The rate at which the system can generate geometry in real time. A higher branch rate is required to produce dense, complex visual patterns that update in response to complex musical passages without dropping frames. This relates to a fundamental principle of real-time computer graphics, formalized in the literature on level-of-detail methods (Luebke et al., 2003): effective management of geometric complexity is key to maintaining interactive frame rates.
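Curvature along a planar cubic Bézier curve can be computed analytically with the standard formula κ(t) = |x′y″ − y′x″| / (x′² + y′²)^(3/2). This formula could underlie values such as the 0.05–0.35 range reported in the Discussion. The sketch below samples κ at evenly spaced parameter values and returns the minimum and maximum. How the paper aggregates curvature into a single range, and in what units, is not specified, so this sampling approach and all function names are assumptions.

```python
import math


def _bezier_derivatives(p0, p1, p2, p3, t):
    """First and second derivatives of a planar cubic Bezier at parameter t."""
    u = 1.0 - t
    d1 = [3 * u * u * (p1[i] - p0[i]) + 6 * u * t * (p2[i] - p1[i])
          + 3 * t * t * (p3[i] - p2[i]) for i in range(2)]
    d2 = [6 * u * (p2[i] - 2 * p1[i] + p0[i]) + 6 * t * (p3[i] - 2 * p2[i] + p1[i])
          for i in range(2)]
    return d1, d2


def curvature(p0, p1, p2, p3, t):
    """Unsigned curvature |x'y'' - y'x''| / (x'^2 + y'^2)^(3/2) at parameter t."""
    (dx, dy), (ddx, ddy) = _bezier_derivatives(p0, p1, p2, p3, t)
    speed_sq = dx * dx + dy * dy
    if speed_sq == 0.0:
        return math.inf  # degenerate point (e.g., a cusp): curvature undefined
    return abs(dx * ddy - dy * ddx) / speed_sq ** 1.5


def curvature_range(p0, p1, p2, p3, samples=101):
    """Minimum and maximum curvature over evenly spaced t in [0, 1]."""
    ks = [curvature(p0, p1, p2, p3, i / (samples - 1)) for i in range(samples)]
    return min(ks), max(ks)
```

As a sanity check, collinear control points give zero curvature everywhere. The usual cubic approximation of a quarter circle of radius 1 gives curvature close to 1.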

4. 2. Results and Discussion

The results show statistically significant differences across all metrics, as detailed in Table 1.

4. 3. User Perception Study

To contextualize user feedback, we conducted a controlled comparative study in which the proposed dynamic phyllotaxis visualization was compared with a control condition: a classical spectrogram visualization.

Methods:

A total of 50 volunteers participated in this study (11 male, 39 female), 33 (66%) of whom had experience in music visualization or design. The stimuli were three music samples (slow-tempo, high-rhythm, and real-time input), each visualized with (A) our proposed method and (B) the spectrogram control. The study used a within-subjects design with counterbalanced presentation order. Participants rated each visualization on the following statements using a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree):

(1) the visualization matched the music very well; (2) the visual style reflected the emotional variation in the music well; (3) the visualization was visually attractive and appealing; and (4) the visualization helped in perceiving structural aspects of the music (e.g., rhythm and intensity changes).

Results:

Paired-sample t-tests were used to compare the two visualization methods on each evaluation item. Table 2 shows the mean ratings and their statistical significance.
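For readers reproducing the analysis, the paired-sample t statistic is t = d̄ / (s_d / √n) with n − 1 degrees of freedom. Here d̄ and s_d are the mean and sample standard deviation of the per-participant rating differences. The sketch below computes t and the degrees of freedom using only the standard library; the function name `paired_t` is hypothetical. Obtaining a p-value additionally requires the t distribution, for example via SciPy's `scipy.stats.ttest_rel`, which performs the same test.

```python
import math
import statistics


def paired_t(a, b):
    """Paired-sample t statistic and degrees of freedom for ratings a vs. b.

    a[i] and b[i] must come from the same participant (within-subjects design).
    """
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [x - y for x, y in zip(a, b)]
    mean_d = statistics.mean(diffs)
    sd_d = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd_d == 0.0:
        raise ValueError("differences have zero variance; t is undefined")
    t = mean_d / (sd_d / math.sqrt(len(diffs)))
    return t, len(diffs) - 1
```

Pairing matters here because each participant rated both conditions. Treating the two sets of ratings as independent samples would ignore between-participant variability and generally misstate the significance.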

The proposed technique scored higher than the spectrogram control on three of the four evaluation items. Music synchronization was rated significantly higher (M = 4.3 vs. 3.5, p < .01), indicating that participants perceived the phyllotaxis visualization as more closely tied to musical events in time. The difference in emotional reflection was among the largest (M = 4.1 vs. 2.9, p = .001), implying that the organic, nature-inspired patterns were more effective at expressing the emotional aspect of the music. Visual engagement and aesthetics were also rated higher (M = 4.4 vs. 3.2, p < .001), with the proposed approach judged more visually appealing. The difference in the perception of musical structure, however, was not significant (M = 3.8 vs. 3.7, p = .25). This is understandable, since spectrograms are specifically designed to represent audio signals analytically. Overall, the mean rating of the proposed method was substantially higher (M = 4.15 vs. 3.33, p < .001).
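Assuming the overall score is the unweighted mean of the four item means, the reported overall values can be checked directly. This matches averaging per-participant composites when every participant rated every item.

```latex
\bar{M}_{\text{proposed}} = \frac{4.3 + 4.1 + 4.4 + 3.8}{4} = \frac{16.6}{4} = 4.15,
\qquad
\bar{M}_{\text{spectrogram}} = \frac{3.5 + 2.9 + 3.2 + 3.7}{4} = \frac{13.3}{4} = 3.325 \approx 3.33
```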

5. Discussion

These experimental results require careful interpretation. In terms of technical performance, the proposed model showed a statistically significant reduction in latency compared with the traditional Vogel model (16 ms vs. 82 ms, p < 0.001). We attribute this to our model’s architecture, which is more amenable to GPU parallelization than the sequential computation of the baseline Vogel model, which places a heavier load on the CPU. Our model also provides a dynamically adjustable curvature range (0.05–0.35), compared with the fixed curvature (0.12) of the baseline, yielding a measurably wider expressive range. Moreover, the higher branch rate (12,000/s vs. 800/s) confirms the model’s capability to produce rich, dense visual content in real time, in contrast to the CPU-limited baseline.
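The latency figures can be put in perspective by assuming a 60 Hz display. This is an assumption, since the refresh rate used in the tests is not reported. Under it, the proposed model’s latency fits within a single frame, while the baseline lags by about five frames:

```latex
T_{\text{frame}} = \frac{1000\ \text{ms}}{60} \approx 16.7\ \text{ms},
\qquad
\frac{16\ \text{ms}}{16.7\ \text{ms}} \approx 0.96\ \text{frames},
\qquad
\frac{82\ \text{ms}}{16.7\ \text{ms}} \approx 4.9\ \text{frames}
```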

Regarding user perception, our proposed approach scored substantially higher than the spectrogram control in synchronization, emotional reflection, and visual engagement. These subjective reports of enhanced emotional and immersive experience may have a plausible neurophysiological explanation in the well-documented effects of music on brain function. Numerous electroencephalographic (EEG) studies have shown that music can significantly influence brainwave activity and is directly linked to emotional and cognitive states (El Sayed et al., 2025). The favorable user ratings of our nature-based visualization suggest that it may harness this innate neural response to music to produce a more unified and effective audiovisual stimulus. Notably, the largest differences were in emotional reflection (p < 0.001) and visual engagement (p < 0.001). This suggests that our nature-based method, by expressing musical emotion more directly and producing aesthetically engaging imagery, may compensate for the expressive shortcomings of traditional mechanistic visualizations. The absence of a significant difference in the perception of musical structure likely reflects the fact that spectrograms are specifically designed for such analysis. These findings were supported by qualitative user feedback describing the phyllotaxis visualization as more organic, emotional, and engaging than the more technical and abstract spectrogram.

Our results indicate possible advantages over currently available music visualization methods. Traditional particle systems rely on mechanistic parameter mappings. By comparison, our phyllotaxis-based approach conveys a sense of natural, organic growth that better reflects the temporal behavior of music. In contrast to fixed fractal visualizations (Jiang et al., 2019), our dynamic model is adaptive and responsive, which is necessary for conveying musical emotion in real time.

Theoretically, this study contributes to HCI and visualization design by providing: (1) a framework linking dynamic natural processes (not only static patterns) with real-time data streams; (2) an example of how Bézier curves can be used effectively as nonlinear mappers for cross-modal expression; and (3) empirical evidence that nature-inspired visual metaphors may enhance the emotional resonance of data visualization.

In practice, the system could be applied in several fields. Live music performances could use such visualizations to engage audiences more deeply, and VR/AR environments could use organic patterns to enhance immersion. Music therapy and education could also exploit the correspondence between visual and musical patterns to help learners better understand musical structure and emotion.

Nevertheless, several limitations should be noted. The current implementation performs poorly on low-end hardware, and the system is sensitive to highly transient audio input. In addition, our evaluation focused on technical performance and immediate user responses and did not address longer-term aesthetic engagement or perceptual impact. Future studies will employ a broader range of evaluation methods, including qualitative approaches. The next stage of research will focus on algorithmic optimization, adaptive smoothing techniques, and machine-learning-based parameter tuning to improve stability and accessibility. We also intend to extend the framework to VR/AR environments and to educational and therapeutic applications, where intuitive audiovisual correspondence is essential.

6. Conclusion

This study was motivated by the recognized shortcomings of traditional music visualization techniques in conveying the structural coherence, rhythmic richness, and emotional depth of musical works. To address them, we developed a dynamic phyllotaxis-based algorithm that combines the spatial organization principles of the Vogel model with the parametric capabilities of cubic Bézier curves. The system maps musical characteristics, such as rhythm, timbre, and emotional intensity, onto evolving spiral patterns in real time.

As our experimental findings indicate, this approach differs from other methods, such as traditional Vogel models and particle systems, along several dimensions. Because the system has low latency (approximately 16 ms) and is morphologically diverse and computationally reliable, it is suitable for real-time interactive applications. Users rated it higher than traditional spectrogram-based visualization in emotional resonance, visual engagement, and aesthetic coherence.

These results support the value of incorporating biologically inspired generative algorithms into visualization design. Beyond enhancing expressive capacity through organic, growth-based models, our approach can strengthen the perceptual and affective connections between sound and image. The correspondence between the self-organization of natural patterns and the structure of music offers a conceptual basis for creating more emotionally moving and aesthetically intuitive audiovisual media.

Overall, our study provides a technical framework and a design paradigm for music visualization based on nature-inspired generative patterns. It offers a way to create more embodied, expressive, and meaningful audiovisual experiences at the intersection of computational design, perceptual studies, and interactive media arts. In future research, we will conduct longitudinal studies and broader comparisons with other contemporary visualization techniques, such as particle systems and fractal-based methods, using more comprehensive evaluation frameworks.

Acknowledgments

We used GPT-5.2 (OpenAI) to help translate the Chinese text into English, refine the English expression, and check the grammar. All translated material was reviewed and edited by the authors.

Notes

Copyright : This is an Open Access article distributed under the terms of the Creative Commons Attribution Non-Commercial License (http://creativecommons.org/licenses/by-nc/3.0/), which permits unrestricted educational and non-commercial use, provided the original work is properly cited.

References

Adler, I. (1997). A history of the study of phyllotaxis. Annals of Botany, 80(3), 231-244. https://doi.org/10.1006/anbo.1997.0422

Bale, A. S., Tiwari, S., Khatokar, A., N, V., & Mohan M S, K. (2021). Bio-inspired computing-a dive into critical problems, potential architecture and techniques. Trends in Sciences, 18(23), 703. https://doi.org/10.48048/tis.2021.703

Bergstrom, T., Karahalios, K., & Hart, J. C. (2007). Isochords: Visualizing structure in music. Proceedings of Graphics Interface 2007 (GI '07), 297. https://doi.org/10.1145/1268517.1268565

Böhm, W., Farin, G., & Kahmann, J. (1984). A survey of curve and surface methods in CAGD. Computer Aided Geometric Design, 1(1), 1-60. https://doi.org/10.1016/0167-8396(84)90003-7

Cantareira, G. D., Nonato, L. G., & Paulovich, F. V. (2016). MoshViz: A detail+overview approach to visualize music elements. IEEE Transactions on Multimedia, 18(11), 2238-2246. https://doi.org/10.1109/TMM.2016.2614226

Carter-Enyi, A., Rabinovitch, G., & Condit-Schultz, N. (2021). Visualizing intertextual form with arc diagrams: Contour and schema-based methods. ISMIR, 74-80.

Ciuha, P., Klemenc, B., & Solina, F. (2010). Visualization of concurrent tones in music with colours. Proceedings of the 18th ACM International Conference on Multimedia, 1677-1680. https://doi.org/10.1145/1873951.1874320

De Prisco, R., Malandrino, D., Pirozzi, D., Zaccagnino, G., & Zaccagnino, R. (2017). Understanding the structure of musical compositions: Is visualization an effective approach? Information Visualization, 16(2), 139-152. https://doi.org/10.1177/1473871616655468

Dean, L., & Loy, J. (2020). Generative product design futures. The Design Journal, 23(3), 331-349. https://doi.org/10.1080/14606925.2020.1745569

El Sayed, B. B., Basheer, M. A., Shalaby, M. S., El Habashy, H. R., & Elkholy, S. H. (2025). The power of music: Impact on EEG signals. Psychological Research, 89(1), 42. https://doi.org/10.1007/s00426-024-02060-6

Farin, G. (2002). A history of curves and surfaces in CAGD. In G. Farin, J. Hoschek, & M.-S. Kim (Eds.), Handbook of computer aided geometric design (pp. 1-21). Amsterdam: Elsevier. https://doi.org/10.1016/B978-044451104-1/50002-2

Fonteles, J. H., Rodrigues, M. A. F., & Basso, V. E. D. (2013). Creating and evaluating a particle system for music visualization. Journal of Visual Languages & Computing, 24(6), 472-482. https://doi.org/10.1016/j.jvlc.2013.10.002

Fowler, D. R., Hanan, J., & Prusinkiewicz, P. (1989). Modelling spiral phyllotaxis. Computers & Graphics, 13(3), 291-296. https://doi.org/10.1016/0097-8493(89)90076-9

Fowler, D. R., Prusinkiewicz, P., & Battjes, J. (1992). A collision-based model of spiral phyllotaxis. Proceedings of the 19th Annual Conference on Computer Graphics and Interactive Techniques, 361-368. https://doi.org/10.1145/133994.134093

Frenkel, M., Legchenkova, I., Shvalb, N., Shoval, S., & Bormashenko, E. (2023). Voronoi diagrams generated by the Archimedes spiral: Fibonacci numbers, chirality and aesthetic appeal. Symmetry, 15(3), 746. https://doi.org/10.3390/sym15030746

Gokmen, S. (2022). Computation and optimization of structural leaf venation patterns for digital fabrication. Computer-Aided Design, 144, 103150. https://doi.org/10.1016/j.cad.2021.103150

Hermann, T., & Ritter, H. (2004). Sound and meaning in auditory data display. Proceedings of the IEEE, 92(4), 730-741. https://doi.org/10.1109/JPROC.2004.825904

Ijiri, T., Owada, S., Okabe, M., & Igarashi, T. (2005). Floral diagrams and inflorescences: Interactive flower modeling using botanical structural constraints. ACM SIGGRAPH 2005 Papers, 720-726. https://doi.org/10.1145/1186822.1073253

Jiang, Y., Li, C., & Wang, Z. (2019). Real-time sound visualization with touch OSC: Stimulating sensibility through rhythm in nature. https://doi.org/10.25236/iclahd.2019.013

Khanteimouri, P., Mandad, M., & Campen, M. (2022). Rational Bézier guarding. Computer Graphics Forum, 41(5), 89-99. https://doi.org/10.1111/cgf.14605

Khulusi, R., Kusnick, J., Meinecke, C., Gillmann, C., Focht, J., & Jänicke, S. (2020). A survey on visualizations for musical data. Computer Graphics Forum, 39(6), 82-110. https://doi.org/10.1111/cgf.13905

Kuhlemeier, C. (2007). Phyllotaxis. Trends in Plant Science, 12(4), 143-150. https://doi.org/10.1016/j.tplants.2007.03.004

Kumari, D. K. M. (2016). Expression of Fibonacci sequences in plants and animals. Bulletin of Mathematics and Statistics Research, 4(1), 27-35. Retrieved from http://www.bomsr.com

Lehtiniemi, A., & Holm, J. (2012). Using animated mood pictures in music recommendation. 2012 16th International Conference on Information Visualisation, 143-150. https://doi.org/10.1109/IV.2012.34

Li, L. (2022). Research and implementation of real time music visualization. Second International Symposium on Computer Technology and Information Science (ISCTIS 2022), 99. https://doi.org/10.1117/12.2654065

Li, W., & Li, J. (2020). Research on music visualization based on graphic images and mathematical statistics. IEEE Access, 8, 100652-100660. https://doi.org/10.1109/ACCESS.2020.2999106

Liao, N. (2022). Research on intelligent interactive music information based on visualization technology. Journal of Intelligent Systems, 31(1), 289-297. https://doi.org/10.1515/jisys-2022-0016

Lima, H. B., Santos, C. G. R. D., & Meiguins, B. S. (2022). A survey of music visualization techniques. ACM Computing Surveys, 54(7), 1-29. https://doi.org/10.1145/3461835

Lintermann, B., & Deussen, O. (1999). Interactive modeling of plants. IEEE Computer Graphics and Applications, 19(1), 56-65. https://doi.org/10.1109/38.736469

Lokki, T., Savioja, L., Vaananen, R., Huopaniemi, J., & Takala, T. (2002). Creating interactive virtual auditory environments. IEEE Computer Graphics and Applications, 22(4), 49-57. https://doi.org/10.1109/MCG.2002.1016698

Luebke, D., Reddy, M., Cohen, J. D., Varshney, A., Watson, B., & Huebner, R. (2003). Level of detail for 3D graphics. San Francisco, CA: Morgan Kaufmann. https://doi.org/10.1016/B978-155860838-2/50003-0

Lyu, Y., Yu, H., Hu, Y., Shu, Q., & Wang, J. (2021). Bionic design for the heat sink inspired by phyllotactic pattern. Proceedings of the Institution of Mechanical Engineers, Part C: Journal of Mechanical Engineering Science, 235(16), 3087-3094. https://doi.org/10.1177/0954406220959094

Machida, W., & Itoh, T. (2011). Lyricon: A visual music selection interface featuring multiple icons. 2011 15th International Conference on Information Visualisation, 145-150. https://doi.org/10.1109/IV.2011.62

Mathai, A. M., & Davis, T. A. (1974). Constructing the sunflower head. Mathematical Biosciences, 20(1-2), 117-133. https://doi.org/10.1016/0025-5564(74)90072-8

Miyazaki, R., Fujishiro, I., & Hiraga, R. (2003). Exploring MIDI datasets. ACM SIGGRAPH 2003 Sketches & Applications, 1-1. https://doi.org/10.1145/965400.965453

Neumann, P., Carpendale, S., & Agarawala, A. (2006). PhylloTrees: Phyllotactic patterns for tree layout. Proceedings of the Eighth Joint Eurographics/IEEE VGTC Conference on Visualization, 59-66.

Newell, A. C., & Shipman, P. D. (2005). Plants and Fibonacci. Journal of Statistical Physics, 121(5-6), 937-968. https://doi.org/10.1007/s10955-005-8665-7

Niklas, K. J. (1988). The role of phyllotactic pattern as a "developmental constraint" on the interception of light by leaf surfaces. Evolution, 42(1), 1-16. https://doi.org/10.1111/j.1558-5646.1988.tb04103.x

Owens, A., Cieslak, M., Hart, J., Classen-Bockhoff, R., & Prusinkiewicz, P. (2016). Modeling dense inflorescences. ACM Transactions on Graphics, 35(4), 1-14. https://doi.org/10.1145/2897824.2925982

Piccini, D., Littmann, A., Nielles-Vallespin, S., & Zenge, M. O. (2011). Spiral phyllotaxis: The natural way to construct a 3D radial trajectory in MRI. Magnetic Resonance in Medicine, 66(4), 1049-1056. https://doi.org/10.1002/mrm.22898

Piegl, L. (1991). On NURBS: A survey. IEEE Computer Graphics and Applications, 11(1), 55-71. https://doi.org/10.1109/38.67702

Prusinkiewicz, P., & Lindenmayer, A. (1990). The algorithmic beauty of plants. Springer New York. https://doi.org/10.1007/978-1-4613-8476-2

Prusinkiewicz, P., Mündermann, L., Karwowski, R., & Lane, B. (2001). The use of positional information in the modeling of plants. Proceedings of the 28th Annual Conference on Computer Graphics and Interactive Techniques, 289-300. https://doi.org/10.1145/383259.383291

Reeves, W. T. (1983). Particle systems: A technique for modeling a class of fuzzy objects. ACM Transactions on Graphics, 2(2), 91-108. https://doi.org/10.1145/357318.357320

Ridley, J. N. (1982). Packing efficiency in sunflower heads. Mathematical Biosciences, 58(1), 129-139. https://doi.org/10.1016/0025-5564(82)90056-6

Ridley, J. N. (1986). Ideal phyllotaxis on general surfaces of revolution. Mathematical Biosciences, 79(1), 1-24. https://doi.org/10.1016/0025-5564(86)90013-1

Saadi, J. I., & Yang, M. C. (2023). Generative design: Reframing the role of the designer in early-stage design process. Journal of Mechanical Design, 145(4), 041411. https://doi.org/10.1115/1.4056799

Sarradj, E. (2015). Optimal planar microphone array arrangements. Fortschritte der Akustik, DAGA 2015, Nürnberg, 41. Jahrestagung für Akustik, March 16-19, 2015.

Soraghan, S., Faire, F., Renaud, A., & Supper, B. (2018). A new timbre visualization technique based on semantic descriptors. Computer Music Journal, 42(1), 23-36. https://doi.org/10.1162/comj_a_00449

Spence, C. (2011). Crossmodal correspondences: A tutorial review. Attention, Perception, & Psychophysics, 73(4), 971-995. https://doi.org/10.3758/s13414-010-0073-7

Stein, B. E., & Stanford, T. R. (2008). Multisensory integration: Current issues from the perspective of the single neuron. Nature Reviews Neuroscience, 9(4), 255-266. https://doi.org/10.1038/nrn2331

Swinbank, R., & James Purser, R. (2006). Fibonacci grids: A novel approach to global modelling. Quarterly Journal of the Royal Meteorological Society, 132(619), 1769-1793. https://doi.org/10.1256/qj.05.227

Valbom, L., & Marcos, A. (2007). An immersive musical instrument prototype. IEEE Computer Graphics and Applications, 27(4), 14-19. https://doi.org/10.1109/MCG.2007.76

Viégas, F. B., & Wattenberg, M. (2007). Artistic data visualization: Beyond visual analytics. In D. Schuler (Ed.), Online communities and social computing (pp. 182-191). Berlin, Heidelberg: Springer. https://doi.org/10.1007/978-3-540-73257-0_21

Vogel, H. (1979). A better way to construct the sunflower head. Mathematical Biosciences, 44(3-4), 179-189. https://doi.org/10.1016/0025-5564(79)90080-4

Yeatts, F. R. (2004). A growth-controlled model of the shape of a sunflower head. Mathematical Biosciences, 187(2), 205-221. https://doi.org/10.1016/j.mbs.2003.09.002

Zeng, L., & Wang, G. (2009). Modeling golden section in plants. Progress in Natural Science, 19(2), 255-260. https://doi.org/10.1016/j.pnsc.2008.07.004